Aspect oriented programming (AOP) is one of those old new ideas that haven't really made a big impact (although perhaps it still will; research ideas sometimes take decades to appear in the professional world). The idea is really neat. We've had a few decades now to practice our modularity, and the problem still hasn't been fully solved (the number of design patterns that have been invented is, I think, telling). What sets AOP apart from plain old "architecture" is the notion of "horizontal" composition. That is to say, you don't solve the problem by decomposing and choosing your parts more carefully; you inject code into critical places instead. The technique is just as general but, I would suggest, applicable in different situations.

I realize I haven't really explained anything yet, so let's look at a suitably contrived example.

A network manager

Suppose you're writing a network manager type of application (I actually tried that once). You might have a class called NetworkIface, and the class has an attribute ip. So how does ip get its value? Well, it can be set statically, or via dhcp. In the latter case there is a method dhcp_request, which requests an ip address and assigns it to ip.

    # <./main.py>

    class NetworkIface(object):
        def __init__(self):
            self.ip = None

        def dhcp_request(self):
            self.ip = (10,0,0,131) # XXX magic goes here

    if __name__ == '__main__':
        iface = NetworkIface()
        iface.ip = (10,0,0,1)
        iface.ip = (10,0,0,2)
        iface.dhcp_request()

Now suppose you are in the course of writing this application, and you need to do some debugging. It would be nice to know a few things about NetworkIface:

- The dhcp server seems to be assigning ip addresses to clients in a (possibly) erroneous manner. We'd like to keep a list of all the ips we've been assigned.

- Sometimes the time between making a dhcp request and getting a response seems longer than reasonable. We'd like to time the execution of the dhcp_request method.

- Some users are reporting strange failures that we can't seem to reproduce. We would like to do exhaustive logging, i.e. every method entry and exit, with parameters.

Now, this kind of debugging logic, however we realize it, is not really something we want in the release version of the application. It doesn't belong. It belongs in debug builds, and we're probably not going to need it permanently.

Here we will demo how to achieve the first point and omit the other two for brevity.

Where AOP comes in

Common to these issues is the fact that they all have to do with information gathering. But that's not necessarily the only thing we might want to do. We might want to tweak the behavior of dhcp_request for the purpose of debugging. For instance, if it took too long to get an ip, we could set one statically after some seconds. Again, that would be a temporary piece of logic not meant to be in the release version.

Now, AOP says "don't change your code, you'll only make a mess of it". Instead you write the piece of code you need, but quite separately from your codebase. This you call an aspect, with the intention that it captures some aspect of behavior you want to inject into your code. And then, during compilation from source code to bytecode (or object code), you inject the aspect code where you want it to go. Compiler? Yes, AOP comes with a special compiler, which makes injection very easy to toggle. Want vanilla code? Use the regular compiler. Want aspected code? Use the AOP compiler.

How does the compiler know where to inject the aspect code? AOP defines strategic injection points called join points. Exactly what these are depends on the programming language, but typically there is a join point preceding a method body, one preceding a method call, one at a method return and so on. (As we shall see, in aopy we are being more Pythonic.) Join points are defined by the AOP framework. But how do you tell it to inject there? With point cuts. A point cut is a matching string (i.e. a regular expression) which is matched against every join point and determines whether injection happens there.
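To make the matching step a bit more concrete, here is a minimal sketch in plain Python 3. It is not how aopy does it internally; the helper names join_points and matching are made up for illustration, and the "module:Class/member" naming is simply borrowed from the point cut used later in this post.

```python
import inspect
import re


class NetworkIface(object):
    def __init__(self):
        self.ip = None

    def dhcp_request(self):
        self.ip = (10, 0, 0, 131)


def join_points(module_name, cls):
    """List one join point name per function defined directly on cls."""
    # Assumed naming scheme: "module:Class/member".
    return sorted(
        "%s:%s/%s" % (module_name, cls.__name__, name)
        for name, member in vars(cls).items()
        if inspect.isfunction(member)
    )


def matching(point_cut, names):
    """Return the join point names that the point cut (a regex) matches in full."""
    pattern = re.compile(point_cut)
    return [name for name in names if pattern.fullmatch(name)]


names = join_points("main", NetworkIface)
print(names)
print(matching(r"main:NetworkIface/dhcp_.*", names))
```

Every name the point cut matches would be a place where advice gets injected; names it does not match are left alone.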

Back to you, John

Enough chatter, the code is getting cold! As it happens, Python has first-rate facilities for writing AOP-ish code. We already have language features that can modify or add behavior to existing code:

- Properties let us micromanage assignment to/reading from instance variables.

- Decorators let us wrap function execution with additional logic, or even replace the original function with another.

- Metaclasses can do just about anything to a class by rebinding the class namespace arbitrarily.
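For a feel of how far the plain language gets you, here is a rough sketch (Python 3, no aopy involved) of the timing and logging wishes from the debugging list, done with a decorator and a metaclass. The names timed, logged and LogAllMethods are invented for this example, and the "t"/"l" output prefixes are arbitrary.

```python
import functools
import inspect
import time


def timed(func):
    """Decorator: report how long each call to func takes."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return func(*args, **kwargs)
        finally:
            elapsed = time.perf_counter() - start
            print("t %s took %.6f s" % (func.__name__, elapsed))
    return wrapper


def logged(func):
    """Decorator: print method entry (with parameters) and exit."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        # args[0] is self, so it is left out of the printout.
        print("l enter %s args=%r kwargs=%r" % (func.__name__, args[1:], kwargs))
        try:
            return func(*args, **kwargs)
        finally:
            print("l exit %s" % func.__name__)
    return wrapper


class LogAllMethods(type):
    """Metaclass: wrap every plain function in the class body with logged."""
    def __new__(mcls, name, bases, namespace):
        for attr, value in list(namespace.items()):
            if inspect.isfunction(value):
                namespace[attr] = logged(value)
        return super().__new__(mcls, name, bases, namespace)


class NetworkIface(metaclass=LogAllMethods):
    def __init__(self):
        self.ip = None

    @timed
    def dhcp_request(self):
        self.ip = (10, 0, 0, 131)  # stand-in for a real dhcp exchange


iface = NetworkIface()
iface.dhcp_request()
```

Note that in this hand-written form the debugging logic sits right in the codebase, which is exactly the mess AOP wants to keep out of it.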

We will use these language constructs as units of code injection, called advice in AOP. This way we can reuse all the decorators and metaclasses we already have and we can do AOP much the way we write code already. Let's see the aspects then.

A caching aspect

The first thing we wanted was to cache the values of ip. For this we have a pair of functions which will become methods in NetworkIface and make ip a property.

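    # <aspects/cache.py>

    class Cache():
        def __init__(self):
            # set() here is still the builtin type: the Cache instance
            # below is created before the module-level set() is defined.
            self.values = set()
            self.value = None

    cache = Cache()

    # fget for the ip property: return the most recent value.
    def get(self):
        return cache.value

    # fset for the ip property: report the new value and everything seen
    # so far, then remember it. (Shadows the builtin set from here on.)
    def set(self, value):
        if value:
            print("c New value: %s" % str(value))
        if any(cache.values):
            prev = ", ".join([str(val) for val in cache.values])
            print("c Previous values: %s" % prev)
        if value:
            cache.values = cache.values.union([value])
        cache.value = value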

Cache is the helper class that will store all the values.

A spec

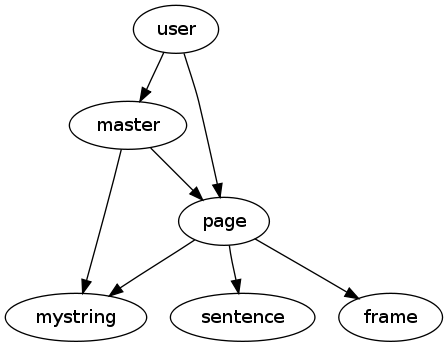

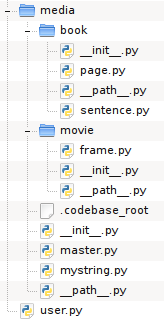

Aspects are defined in specification files which provide the actual link between the codebase and the aspect code.

    # <./spec.py>

    import aopy
    import aspects.cache

    caching_aspect = aopy.Aspect()
    caching_aspect.add_property('main:NetworkIface/ip',
        fget=aspects.cache.get, fset=aspects.cache.set)

    __all__ = ['caching_aspect']

We start by importing the aopy library and the aspect code we've written. Then we create an Aspect instance and call add_property to add a property advice to this aspect. The first argument is the point cut, i.e. the matching string which defines what this property is to be applied to. Here we say "in a module called main, in a class called NetworkIface, find a member called ip". The other two arguments provide the two functions we wish to use in this property.
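If the point cut syntax feels abstract, the following sketch shows roughly what "find module, find class, attach property" amounts to when done by hand at runtime in Python 3. This is only an illustration under assumptions: aopy works by transforming the module at compile time rather than patching classes like this, apply_property is a hypothetical helper, and the sketch handles a literal point cut only, not a pattern. The getter and setter here keep the value per instance, unlike the shared Cache above.

```python
import types

seen = []  # every non-empty value assigned to ip, in order


def fget(self):
    return self.__dict__.get("_ip")


def fset(self, value):
    if value:
        seen.append(value)
    self.__dict__["_ip"] = value


class NetworkIface(object):
    def __init__(self):
        self.ip = None

    def dhcp_request(self):
        self.ip = (10, 0, 0, 131)


def apply_property(point_cut, modules, fget, fset):
    """Hypothetical helper: resolve 'module:Class/member' and install a property."""
    module_name, rest = point_cut.split(":", 1)
    class_name, member = rest.split("/", 1)
    cls = getattr(modules[module_name], class_name)
    setattr(cls, member, property(fget=fget, fset=fset))


# Stand-in for the real main module, so the sketch is self-contained.
modules = {"main": types.SimpleNamespace(NetworkIface=NetworkIface)}
apply_property("main:NetworkIface/ip", modules, fget, fset)

iface = NetworkIface()
iface.ip = (10, 0, 0, 1)
iface.dhcp_request()
print(seen)  # [(10, 0, 0, 1), (10, 0, 0, 131)]
```

Because a property lives on the class and takes precedence over the instance dictionary, even the assignment inside __init__ goes through fset once the property is installed.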

Compiling

To compile the aspect into the codebase we run the compiler, giving it the spec file and a module (or a path) that indicates the codebase.

    $ aopyc -t spec.py main.py
    Transforming module /home/alex/uu/colloq/aopy/code/main.py
    Pattern matched: main:NetworkIface/ip on main:NetworkIface/ip

The compiler will examine all the modules in the codebase (in this case only main.py) and attempt code injection in each one. Whenever a point cut matches, injection happens. The transformed module is then compiled to bytecode and written to disk (as main.pyc).

Shown as source, main.pyc now looks like this:

    # <./main.py> transformed

    import sys ### <-- injected
    for path in ('.'): ### <-- injected
        if path not in sys.path: ### <-- injected
            sys.path.append(path) ### <-- injected
    import aspects.cache as cache ### <-- injected

    class NetworkIface(object):
        def __init__(self):
            self.ip = None

        def dhcp_request(self):
            self.ip = (10,0,0,131) # XXX magic goes here

        ip = property(fget=cache.get, fset=cache.set) ### <-- injected

    if __name__ == '__main__':
        iface = NetworkIface()
        iface.ip = (10,0,0,1)
        iface.ip = (10,0,0,2)
        iface.dhcp_request()

Injected lines are marked. First we find some import statements that are meant to ensure that the codebase can find the aspect code on disk. Then we import the actual aspect module that holds our advice. And finally we can ascertain that NetworkIface has gained a property, with get and set methods pulled in from our aspect code.
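The kind of transformation shown above can be imitated in a few lines with Python 3's standard ast module. This is a sketch of the general technique only, not aopy's actual transformer: the class InjectProperty and the names _get, _set and _seen are invented for the example, and the advice is simply prepended to the source instead of being imported from an aspect module.

```python
import ast

SOURCE = '''
class NetworkIface(object):
    def __init__(self):
        self.ip = None

    def dhcp_request(self):
        self.ip = (10, 0, 0, 131)
'''

# Advice code, kept apart from the codebase source above.
ADVICE = '''
_seen = []

def _get(self):
    return self.__dict__.get("_ip")

def _set(self, value):
    if value:
        _seen.append(value)
    self.__dict__["_ip"] = value
'''


class InjectProperty(ast.NodeTransformer):
    """Append `<member> = property(fget=..., fset=...)` to one class body."""

    def __init__(self, class_name, member, fget_name, fset_name):
        self.class_name = class_name
        self.statement = ast.parse(
            "%s = property(fget=%s, fset=%s)" % (member, fget_name, fset_name)
        ).body[0]

    def visit_ClassDef(self, node):
        if node.name == self.class_name:
            node.body.append(self.statement)
        return node


# Parse, transform, then compile the modified tree to a code object.
tree = ast.parse(ADVICE + SOURCE)
tree = InjectProperty("NetworkIface", "ip", "_get", "_set").visit(tree)
ast.fix_missing_locations(tree)
code = compile(tree, "<transformed main>", "exec")

namespace = {}
exec(code, namespace)

iface = namespace["NetworkIface"]()
iface.dhcp_request()
print(namespace["_seen"])  # [(10, 0, 0, 131)]
```

The SOURCE string itself is never modified, which mirrors the point of the exercise: the original code stays vanilla and only the compiled result carries the aspect.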

Running aspected

When we now run main.pyc we get a message every time ip gets a new value. We also get a printout of all the previous values.

    c New value: (10, 0, 0, 1)
    c New value: (10, 0, 0, 2)
    c Previous values: (10, 0, 0, 1)
    c New value: (10, 0, 0, 131)
    c Previous values: (10, 0, 0, 1), (10, 0, 0, 2)

And yet the codebase has not been touched: if we execute main.py instead, we find the original code.

Here the show endeth

And that wraps up a hasty introduction to AOP with aopy. There is a lot more to be said, both about AOP in Python and aopy in particular. Interested parties are kindly directed to these two papers:

- Strategies for aspect oriented programming in Python

- aopy: A program transformation-based aspect oriented framework for Python

If you prefer reading code rather than English (variable names are still in English though, sorry about that), here is the repo for your pleasure:

And if you still have no idea what AOP is and think the whole thing is bogus, then you can watch this Google talk (and who doesn't love a Google talk!) by Mr. AOP himself.

April 25th, 2010
