Most people are reasonably discreet by nature. They don't feel an urge to flaunt their personality or draw attention to themselves that often. It's a fact of life that we live in an unfriendly world, amongst aggressive peers. If you stick your neck out more then you'll have to stand up for yourself more. Thus most people develop a (healthy? at least in terms of survival) tendency to not advertise themselves excessively, especially not facts they suspect their peers will consider weaknesses. And so when they find themselves in a classroom with 24 peers, they feel somewhat less than eager to declare ignorance about the current topic. Teachers know this, and they think it's unfortunate that the fear of embarrassment keeps people from learning. And this is when they declare that: There is no such thing as a stupid question!

This is a well-meaning encouragement to dare to admit that you're ignorant, because in this room you're allowed to be. Unfortunately, it's also a misleading statement. If you've ever taken a class with a person who wasn't shy, but *did* ask a lot of stupid questions, you already know that a) there *is* such a thing as a stupid question and b) it is ill-advised to keep asking them. A person who is either particularly ignorant or exceptionally obtuse is a real disruption to the thought process of the people who can follow the material. It destroys the flow, in just the same way as someone interrupting a movie every 5 minutes to spend 2 minutes explaining what just happened on the screen.

The teacher is probably more tolerant of stupid questions than your peers are, but there is a limit to how much time can be spent explaining obvious things to an ignoramus, because after all the mission is to get through all of today's material. So stupid questions are obviously not appropriate in large quantities, whatever the commercial says.

Interestingly, the expression isn't "it's okay to ask stupid questions", so no allowance is made for those questions at all. On the contrary, it redefines all questions to be of the not-stupid nature. Perhaps we should call them "smart questions". The not-stupid reader will notice that the result is ultimately the same as admitting stupid questions. Whether you're allowed to ask stupid questions, or you're not allowed to but there are no stupid questions, permission is granted to ask all questions. And the strange twist is just a little morale boost for you, an encouragement. We allow stupid questions, but your questions aren't stupid anyway, so don't worry about that. *wink*

As bogus as the expression is, is there any truth to it at all? It defines "stupid questions", some category that apparently must have been discovered by someone. If stupid questions form a subset of all questions, there must be another category that isn't stupid. So is it actually true that it's impossible to distinguish a presumably stupid question from a smart question? Why, that too is completely untrue; anyone who took the class with that stupid-question-asker knows this.

So we know that a) stupid questions exist and b) you shouldn't be asking them. But here's the problem: how do you know if your question is stupid?

It seems to me that there is no general answer. If we take the literal definition of the word "stupid" we find:

characterized by or proceeding from mental dullness; foolish; senseless: a stupid question.

However, none of these assessments - dullness, foolishness, senselessness - are absolute terms. They take on meaning in context, and only then. So in other words, if you are a globally renowned expert in some field and you receive questions from people all around the world, people whose background you know nothing about, and with whom you've never interacted before, then none of those questions, no matter how elementary, can be stupid. Because it's impossible to infer "mental dullness", or "foolishness", or "senselessness" based on one question.

Wherever you have a congregation of two persons or more the accepted standard of discourse on any topic that comes up is decided within a few minutes, as soon as the participants negotiate an acceptable place to set the bar. Just how this happens is too complicated to cover here, but it's influenced by things like how socially dominant the various participants are, what they stand to win or lose by admitting to competence or ignorance and so on. However, once that standard has been informally negotiated, any questions visibly below the standard will be perceived as stupid.

Although the audience makes an instant determination about a question being asked, this isn't actually a correct assessment. Broadly speaking (although this departs somewhat from the dictionary definition), stupid questions can be divided into two categories.

First, there are ignorant questions, which betray a lack of competence about the topic. This is just an indication that the person doesn't have the same background as everyone else. This is actually less of a failing for the person in question, because you can't really blame someone for not knowing something they haven't had the opportunity to learn, can you? But it's still very disruptive to everyone else.

Second, there are questions that are by definition stupid. "Mental dullness" would be a failure to make the right deductions based on the known facts. So a prior fact "this chair is heavy", combined with a new fact "heavy things hurt when dropped on your foot" would make the question "what happens if I drop this chair on my foot" a stupid question. It would also be a foolish idea, which seems to me to be "mental dullness" in a case where the outcome is unfavorable to you personally. But you could also ask a different question. "But what if this happened on a Tuesday, would it still hurt?" That question makes no sense. It seems to me that "senseless" questions stem from a false conclusion somewhere in deduction, i.e. that the day of the week has an impact on your physiological responses.

While questions due to ignorance are an obvious waste of time (depending on the degree of ignorance), questions due to "mental dullness" are socially accepted to a point. The real problem is that the assessment isn't accurate.

To determine whether a person is:

lacking ordinary quickness and keenness of mind

we would have to compare his performance to that of another person. In other words, given the same facts, will the dull person fail to make the deduction while the other succeeds? If so, a question that betrays the absence of this deduction would correctly be described as stupid.

But how to conduct such an experiment? People gather in a classroom from all corners of the city (just to keep it simple). If they attended different schools they would not have had the same curriculum. But even the exact same schooling does not guarantee that two persons will have absorbed the same facts. Perhaps one was paying attention while the other wasn't, perhaps one was gone that day, perhaps one remembers this fact and the other doesn't, perhaps one never understood it while the other did. Memory tests conducted with groups of participants show that a 30 minute exposure to the same words, images etc. produces vastly different recollections of what was seen.

So if we cannot stage such an experiment then we cannot infer dullness of mind, and hence the determination of the stupid question is undecided.

So it cannot be decided from the outside, but the person cannot decide this either. You can ask yourself the question "is there some basic fact that makes this question stupid that I'm not aware of?". If so, it will be judged a stupid question, but it's not stupid based on *your* known facts. And it's only after you've understood the topic that you can determine if it was stupid. If it turns out you were missing necessary information, then it wasn't stupid. If you weren't missing anything, then it would seem your mind was "dull". However, if you have a "dull mind" to begin with, then perhaps you see no anomaly in your performance that day.

The senseless question is an interesting case, because it originates from a false conclusion. What are we to make of this? Is it because you misinterpreted a fact and thus made a wrong turn, or did you have all the facts straight, but still somehow managed to deduce the wrong thing? That brings up the question of whether the mind is capable of making an incorrect deduction like that. Or whether you're guaranteed, having all the right facts, to produce the right answer. That is a common assumption we make when debating with people. We think that as long as we straighten out their warped world view, we can get them thinking straight.

So you can't tell if the question is stupid, and the audience doesn't know if it's stupid, even if it's obvious to them. Maybe that's why someone got really depressed and went into denial, postulating that there are no stupid questions. I guess that means there are no foolish or senseless questions.

May 2nd, 2008


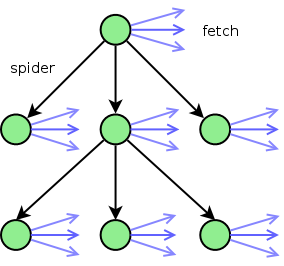

Starting at the url to be spidered (the top green node), we spider the page for urls. For each of the urls found, it ends up in one of three categories:
