The thing about a project like Newman is that it's basically impossible to make it work perfectly. It has a difficult job, because there are so many potential sources of error: servers may go offline, connections may fail, article formats may change, and so on. It is all but impossible to guarantee that Newman will do the right thing, because at the end of the day we are trying to analyze text, and computers are not good at that. Just look at spam filters - they have been improved for years, yet everyone still gets spam. Much less than before, of course, so the filters are definitely useful. Newman makes mistakes too, but it still succeeds quite often.

Newman has been posting on Xtratime.org under the username Carsonne, a French female impersonator of Carson35, it would seem. :D Carsonne has averaged about 15 posts a day since July 30, which comes to a little over 350 posts in all, each one a news story. I haven't been keeping score, so I have no hard statistics to present, but I have kept a close eye on Carsonne and I would estimate that upwards of 90% of the stories posted were correctly parsed, formatted and classified. In fact, I recall about 10-15 misposts among the ones I've seen (which I think is most of them), which would put the success rate at around 95%. That is still an error rate no human poster would have. I would claim that a human poster would have a >99.5% success rate at copy/pasting and classifying stories (fewer than 2 misposts in 350), so Carsonne's estimated 5% error rate is at least an order of magnitude worse than a human's.
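To make the arithmetic behind that comparison concrete, here is a minimal Python sketch. It only uses the figures quoted above (350 posts, 10-15 recalled misposts, the 95% estimate and the claimed 99.5% human success rate). The function names are made up for this illustration and are not part of Newman.

```python
# Figures come from the paragraph above; the helper names are hypothetical.

def error_rate(misposts: int, total: int) -> float:
    """Fraction of posts that went wrong."""
    return misposts / total


def expected_misposts(rate: float, total: int) -> float:
    """How many misposts a given error rate implies over `total` posts."""
    return rate * total


TOTAL_POSTS = 350
HUMAN_ERROR_RATE = 0.005  # claimed >99.5% human success, used as an upper bound
BOT_ESTIMATE = 0.05       # the estimated 95% success rate for Carsonne

low = error_rate(10, TOTAL_POSTS)
high = error_rate(15, TOTAL_POSTS)
human_limit = expected_misposts(HUMAN_ERROR_RATE, TOTAL_POSTS)

print(f"counted misposts: {low:.1%} to {high:.1%} error rate")
print(f"human misposts in {TOTAL_POSTS}: under {human_limit:.2f}")
print(f"bot vs human, from the count: "
      f"{low / HUMAN_ERROR_RATE:.1f}x to {high / HUMAN_ERROR_RATE:.1f}x")
print(f"bot vs human, from the 95% estimate: "
      f"{BOT_ESTIMATE / HUMAN_ERROR_RATE:.0f}x")
```

The recalled misposts work out to an error rate of roughly 3% to 4%, which is six to nine times the human bound. The rounder 5% estimate is what gives the full order of magnitude.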

What about user input, then? Well, unfortunately Newman does come with a certain configuration cost; not everything can be automated. In particular, finding channels is something that would be wonderful to automate, given how quickly the forum climate changes. Newman also requires that sources be configured (and, if need be, updated) for the parsing to work. Of course, once that is in place, Newman can post at will. So what Newman can do on its own is still quite limited.

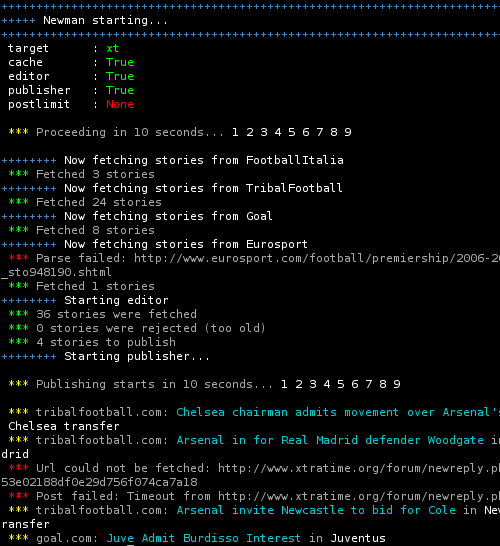

The screenshot shows a typical run of Newman. Quite a few stories were fetched, some were selected for posting, and then posted. It also shows how Newman is fault tolerant - a parsing error was handled gracefully, as was a timeout from the forum web server.
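For readers wondering what "handled gracefully" can look like in code, here is a minimal Python sketch of the idea: one bad story or one slow server should not abort the whole run. This is not Newman's actual code. `ParseError`, `run_once` and the `parse`/`post` callables are all hypothetical names chosen for the illustration.

```python
class ParseError(Exception):
    """Hypothetical error for an article that doesn't match the expected format."""


def run_once(stories, parse, post, log=print):
    """Try to parse and post each story; log failures and carry on."""
    posted, skipped = [], []
    for raw in stories:
        try:
            item = parse(raw)
            post(item)
        except ParseError as exc:
            # The source format probably changed; skip this story only.
            log(f"parse error, skipping: {exc}")
            skipped.append(raw)
        except TimeoutError as exc:
            # The forum server didn't answer; leave the story for a later run.
            log(f"forum timed out, skipping for now: {exc}")
            skipped.append(raw)
        else:
            posted.append(item)
    return posted, skipped
```

The point of the design is that errors are caught per story rather than around the whole loop, so the run finishes and reports what was skipped.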

After 20+ days on the forum, Carsonne has been active long enough to stir up some reactions about "her" ;) posting of news. Carson's long tenure has paved the way for posters like this, so Carsonne is seen by most as just another compulsive news poster. "She" has taken some heat over posting news in the wrong place (wrong classification), but beyond that it has been no worse than Carson gets daily.

So what have we learnt?

As is often the case, Project Newman seems to have raised more questions than it has answered. Sure enough, it isn't too hard to automate posting on a forum, it isn't too hard to fetch stories from the web and parse them, and it certainly isn't hard to automate all this to a pace no human could keep up with. But it is hard to decide what text means, it is hard to decide which story is relevant to which thread, it is hard to decide whether a word in a sentence is a name, and so on.

The question is: just how do you do these things in a reliable way?

Thus endeth Project Newman. Download the code from the code page if you're interested.

This entry is part of the series Project Newman.

August 29th, 2006
