That's a question that's been bugging me for months now. It's so vexing to try to find something out and not get an answer. All the more so when you look it up in a couple of different places and the answers don't seem to have much to do with each other. Obviously, once you have the big picture, all those answers intersect in a meaningful place, but while you're still hunting for it, that's not helpful at all.

I put this question to a wizard and the answer was (not an exact quote):

A function whose free variables have been bound.

Don't you love to get a definition in terms of other terms you're not particularly comfortable with? Just like a math textbook. This answer confused me, because I couldn't think of a function I had seen whose variables weren't bound, so I thought I must be missing something. The Python answer is very simple:

A nested function.

One good answer is all you need. When you can't get that, sometimes you end up stacking up several unclear answers and hoping you can piece it all together. And that can very well fail.

I read a definition today that finally made it clear to me. It's not the simplest and far from the most intuitive description. In fact, it too reads like a math textbook. But it's simply what I needed to hear in words that would speak to me.

A lexical closure, often referred to just as a closure, is a function that can refer to and alter the values of bindings established by binding forms that textually include the function definition.

I read it about 3 times, forwards and backwards, carefully making sure that as I was lining up all the pieces in my mind, they were all in agreement with each other. And once I verified that, and double checked it, I felt so relieved. Finally!

I can't follow the Common Lisp example that follows on that page, but scroll down and you find a piece of code that is much simpler.

    (define (foo x)
      (define (bar y)
        (+ x y))
      bar)

    ((foo 1) 5)  ; => 6
    ((foo 2) 5)  ; => 7

What's going on here? First there is a function being defined. Its name is foo and it takes a parameter x. Now, once we enter the body of this function foo, straight away we have another function definition - a nested function. This inner function is called bar and takes a parameter y. Then comes the body of the function bar, which says "add variables x and y". And then? Follow the indentation (or the parentheses). We have now exited the function definition of bar and we're back in the body of foo, which says "the value bar", so that's the return value of foo: the function bar.

In this example, bar is the closure. Just for a second, look back at how bar is defined in isolation, don't look at the other code. It adds two variables: y, which is the formal parameter to bar, and x. How does x receive its value? It doesn't. Not inside of bar! But if you look at foo in its entirety, you see that x is the formal parameter to foo. Aha! So the value of x, which is set inside of foo, carries through to the inner function bar.
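Since the Python answer was "a nested function", here is a rough Python rendering of the same foo and bar. It is only a sketch of the Scheme code above, not something from the page I was reading, and `add_one` is a name I made up for the returned function.

```python
def foo(x):
    # x is the formal parameter of the outer function foo.
    def bar(y):
        # y is bar's own parameter. x is not defined inside bar,
        # so it is looked up in the enclosing function foo.
        return x + y
    # Return the function bar itself, not the result of calling it.
    return bar


add_one = foo(1)         # hypothetical name for the bar we got back
print(add_one.__name__)  # bar
print(add_one(5))        # 6
```

The thing to notice is that `foo(1)` hands back a function, and that function still knows what x was.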

Can we square this code with the answers quoted earlier? Let's try.

A function whose free variables have been bound. - A function, in this case bar. Free variables, in this case x. Bound, in this case defined as the formal parameter x to the function foo.

A nested function. - The function bar.

A lexical closure, often referred to just as a closure, is a function that can refer to and alter the values of bindings established by binding forms that textually include the function definition. - A function, in this case bar. That can refer to and alter, in this case bar refers to the variable x (it never alters it here). Values of bindings, in this case the value of the bound variable x. Established by binding forms, in this case the definition of foo, whose parameter list binds x. That textually include the function definition, in this case foo includes the function definition of bar.
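If you want to see the "free variables have been bound" part with your own eyes, Python lets you peek inside the function object. The sketch below assumes Python 3 and CPython's `__code__` and `__closure__` attributes. The second half shows the "alter" part of the long definition, which the foo and bar example never exercises; `make_counter`, `count` and `increment` are names I invented for illustration.

```python
def foo(x):
    def bar(y):
        return x + y
    return bar


bar = foo(1)
# The free variables of bar, i.e. names it uses but does not define itself.
print(bar.__code__.co_freevars)          # ('x',)
# The value that x was bound to when foo was called.
print(bar.__closure__[0].cell_contents)  # 1


def make_counter():
    count = 0

    def increment():
        # nonlocal lets the inner function rebind the enclosing variable
        # instead of creating a new local one.
        nonlocal count
        count += 1
        return count

    return increment


counter = make_counter()
print(counter())  # 1
print(counter())  # 2
```

So a closure can do more than read the enclosing binding, it can also change it, as long as the inner function is written to do so.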

So yes, they all make sense. If you understand what it's all about. :/

Let's return to the code example. We now call the function foo with argument 1. As we enter foo, x is bound to 1. We now define the function bar and return it, because that is the return value of foo. So now we have the function bar, which takes one argument. We give it the argument 5. As we enter bar, y is bound to 5. And x? Is it an undefined variable, since it's not defined inside bar? No, it's bound *from before*, from when foo was called. So now we add x and y.

In the second call, we call foo with a different argument, thus x inside of bar receives a different value, and once the call to bar is made, this is reflected in the return value.

Well, that was easy. And to think I had to wait so long to clarify such a simple idiom. So what is all the noise about anyway? Think of it as a way to split up the assignment of variables. Suppose you don't want to assign x and y at the same time, because y is a "more dynamic" variable whose value will be determined later. Meanwhile, x is a variable you can assign early, because you know it's not going to need to be changed.

So each time you call foo, you get a version of bar that has a value of x already set. In fact, from this point on, for as long as you use this version of bar, you can think of x as a constant that has the value that it was assigned when foo was called. You can now give this version of bar to someone and they can use it by passing in any value for y that they want. But x is already determined and, since bar never assigns to it, can't be changed.
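Here is a small Python sketch of that split: x gets assigned early, y later, and each version of bar keeps its own x. The names `add_one`, `add_two` and `apply_to_all` are made up for the example; `apply_to_all` stands in for the "someone" you hand the function to.

```python
def foo(x):
    def bar(y):
        return x + y
    return bar


# Assign x early: each call to foo gives a separate version of bar.
add_one = foo(1)
add_two = foo(2)

# Assign y later, as often as you like.
print(add_one(5))  # 6
print(add_two(5))  # 7
print(add_one(5))  # 6, creating add_two did not disturb add_one's x


def apply_to_all(func, values):
    # The receiver only supplies y; it never sees or sets x.
    return [func(v) for v in values]


print(apply_to_all(add_two, [5, 10, 15]))  # [7, 12, 17]
```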

April 30th, 2008

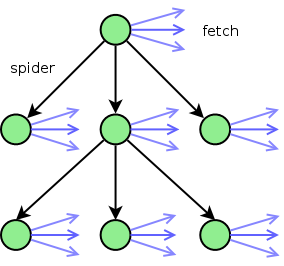

Starting at the URL to be spidered (the top green node), we spider the page for URLs. Each URL found ends up in one of three categories: