Network connections fail all the time; we've all been there. So many things can go wrong: the network adapter driver can fail, the DHCP server can revoke the lease, the wifi router can disappear, the routing may be wrong somewhere along the line, the DNS server can be overloaded, or the remote host may be down. Those are just some of the possibilities, and tracking down the problem can be quite a pain.

But the first thing to do is to figure out exactly what is working and what isn't. If you know that much, then at least you know where to start. My goal here is to create a fairly simple test that examines the status of the network connection step by step, leading up to a working internet connection. One constraint is that I want it to be portable, so that I can carry it around along with my dotfiles. That means it should work anywhere I can get a shell, without requiring anything beyond the usual system tools.

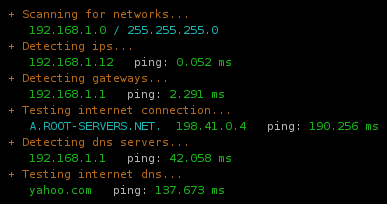

A fully functional network connection looks like this:

What I do is detect the parameters of the network step by step, using regular tools like route and ifconfig. Once I know what the hosts are, I ping them. Now, a ping obviously isn't a foolproof test: on a network that blocks outgoing ICMP, it's entirely possible that you can still connect out over TCP. So what you really should do is a TCP probe on port 80, not a ping. But ping is extremely portable, whereas a TCP/UDP probe asks a lot more of the environment, needing something like nmap or hping. That's why the script pings every host and uses nmap only for the DNS and HTTP probes.
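As an aside on the TCP probe: bash itself can attempt a TCP connection through its `/dev/tcp/host/port` redirection, which sidesteps nmap on systems where bash was built with that feature. This is only a sketch and not part of the script below; the name `tcp_probe` and the reliance on the coreutils `timeout` command are my assumptions.

```bash
# tcp_probe HOST PORT [SECONDS]: succeed if a TCP connection can be opened.
# Hypothetical helper, not part of the original script. Assumes a bash built
# with /dev/tcp support and the coreutils `timeout` command.
tcp_probe() {
    local host="$1" port="$2" secs="${3:-2}"
    # The child bash opens fd 3 on the pseudo-path and closes it on exit;
    # host and port are passed as $0 and $1 to avoid quoting problems.
    timeout "$secs" bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$host" "$port" 2>/dev/null
}

# Usage: probe a web server on port 80, as the text suggests.
# if tcp_probe google.com 80; then echo "http: ok"; else echo "http: failed"; fi
```

Unlike nmap this reports only open versus not open, and it can't do the UDP probe at all, so it is a fallback rather than a replacement.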

Once you've established that the connection is working, and you want to know more about the network, you can go further with something like netscan.

The code is relatively stupid and messy, but that's the way bash is.

    # Author: Martin Matusiak <numerodix@gmail.com>
    # Licensed under the GNU Public License, version 3

    function havenet {
        local init="$(toolsinit)"
        platforminit
        case "$PLATFORM" in
            "Linux")
                echo "Platform: $PLATFORM"
                run_havenet "$init";;
            *)
                echo "Platform: $PLATFORM (local network detection not supported)"
                run_haveinet "$init";;
        esac
    }

    function haveinet {
        local init="$(toolsinit)"
        platforminit
        echo "Platform: $PLATFORM"
        run_haveinet "$init"
    }

    function platforminit {
        # Test the exit status of which directly rather than through $(...)
        if which uname &>/dev/null; then
            PLATFORM=$(uname 2>/dev/null)
        fi
    }

    function toolsinit {
        # Print a string of "local name=cmd args" assignments for the tools
        # that are present; the run_* functions eval it. Missing tools are
        # reported on stderr.
        local tools="true"
        local missing=()
        local cmds=(/sbin/route /sbin/ifconfig ping nmap)
        local args=(" -n" "" " -c1 -W2" " -T3")
        local names=(route ifconfig ping nmap)
        local i=-1
        while [ $i -lt $(( ${#names[@]} - 1 )) ]; do i=$(( $i+1 ))
            if which "${cmds[$i]}" &>/dev/null; then
                tools="$tools;local ${names[$i]}=\"${cmds[$i]}${args[$i]}\""
            else
                missing[${#missing[@]}]="${cmds[$i]}"
            fi
        done
        if [ ${#missing[@]} -gt 0 ]; then
            echo -e "Missing tools: ${cred}${missing[*]}${creset}" 1>&2
        fi
        echo "$tools"
    }

    function run_havenet {
        eval "$@"

        # Private and link-local prefixes. Dots are escaped and each prefix ends
        # at an octet boundary, so that "10" does not also match 100.x.x.x
        local localranges="169\.254\. 10\. 172\.(1[6-9]|2[0-9]|3[0-1])\. 192\.168\."

        ### Scan networks
        echo -e "${cyellow} + Scanning for networks...${creset}"
        test=$($route 2>/dev/null | egrep "^[1-9]")
        if [[ $? != 0 ]]; then
            echo -e " ${cred}none found${creset}"
        else
            local nets=$(echo "$test" | sort | awk '{ print $1 }')
            for net in $nets; do
                # Column 3 of "route -n" is the netmask
                local mask=$($route 2>/dev/null | egrep "^$net" | awk '{ print $3 }')
                printf " ${cgreen}%-15s${creset} ${ccyan}%s${creset}\n" "$net" "/ $mask"
            done

            ### Detect ips
            local ips=
            for net in $nets; do
                # Strip trailing ".0" octets to get the network prefix; the dot
                # is escaped so that e.g. "192.168.10" is left alone
                local r=$(echo $net | sed "s/\.0$//" | sed "s/\.0$//" | sed "s/\.0$//")
                local ip=$($ifconfig 2>/dev/null | grep "inet addr:$r\." | sed "s/inet addr:\([0-9.]*\).*$/\1/g")
                ips="$ip $ips"
            done

            echo -e "${cyellow} + Detecting ips...${creset}"
            test=$(echo "$ips" | egrep -v "^[ ]+$")
            if [[ $? != 0 ]]; then
                echo -e " ${cred}none found${creset}"
            else
                local on_lan=1
                for ip in $ips; do
                    printf " ${cgreen}%-15s${creset} %s" "$ip" "ping: "
                    test=$($ping $ip 2>/dev/null)
                    if [[ $? != 0 ]]; then
                        echo -e "${cred}failed${creset}"
                    else
                        local t=$(echo "$test" | grep "min/avg" | sed "s/.*= \([0-9.]*\)\/.*$/\1/g")
                        echo -e "${cgreen}$t ms${creset}"
                    fi

                    ### Test ips for lan
                    local ip_on_lan=0
                    for prefix in $localranges; do
                        test=$(echo "$ip" | egrep "^$prefix" 2>/dev/null)
                        if [[ $? == 0 ]]; then
                            ip_on_lan=1
                        fi
                    done
                    on_lan=$(( $on_lan & $ip_on_lan ))
                done

                if [ $on_lan -eq 1 ]; then
                    ### Detect gateways if on lan
                    echo -e "${cyellow} + Detecting gateways (network is local)...${creset}"
                    test=$($route 2>/dev/null | grep UG)
                    if [[ $? != 0 ]]; then
                        echo -e " ${cred}none found${creset}"
                    else
                        local gws=$(echo "$test" | awk '{ print $2 }')
                        for gw in $gws; do
                            printf " ${cgreen}%-15s${creset} %s" "$gw" "ping: "
                            test=$($ping $gw 2>/dev/null)
                            if [[ $? != 0 ]]; then
                                echo -e "${cred}failed${creset}"
                            else
                                local t=$(echo "$test" | grep "min/avg" | sed "s/.*= \([0-9.]*\)\/.*$/\1/g")
                                echo -e "${cgreen}$t ms${creset}"
                            fi
                        done

                        ### Try inet connection if we have a gateway
                        run_haveinet "$@"
                    fi
                else
                    ### Try inet connection, we're on wan
                    run_haveinet "$@"
                fi
            fi
        fi
    }

    function run_haveinet {
        eval "$@"

        local rootname="A.ROOT-SERVERS.NET."
        local rootip="198.41.0.4"
        local dnsport="53"
        local inethosts="yahoo.com google.com"
        local inetport="80"

        ### Test inet connection
        echo -e "${cyellow} + Testing internet connection...${creset}"
        echo -en " ${ccyan}$rootname ${cgreen}$rootip${creset} ping: "
        test=$($ping $rootip 2>/dev/null)
        if [[ $? != 0 ]]; then
            echo -e "${cred}failed${creset}"
        else
            local t=$(echo "$test" | grep "min/avg" | sed "s/.*= \([0-9.]*\)\/.*$/\1/g")
            echo -e "${cgreen}$t ms${creset}"
        fi

        ### Detect dns
        echo -e "${cyellow} + Detecting dns servers...${creset}"
        test=$(cat /etc/resolv.conf 2>/dev/null | grep ^nameserver)
        if [[ $? != 0 ]]; then
            echo -e " ${cred}none found${creset}"
        else
            local dnss=$(echo "$test" | awk '{ print $2 }')
            for dns in $dnss; do
                printf " ${cgreen}%-15s${creset} %s" "$dns" "ping: "
                test=$($ping $dns 2>/dev/null)
                if [[ $? != 0 ]]; then
                    echo -en "${cred}failed${creset}"
                else
                    local t=$(echo "$test" | grep "min/avg" | sed "s/.*= \([0-9.]*\)\/.*$/\1/g")
                    echo -en "${cgreen}$t ms${creset}"
                fi

                # An nmap udp scan needs root, so probe over tcp otherwise
                local proto="tcp" ; local udpflags=""
                if [[ `whoami` = "root" ]]; then
                    proto="udp"; udpflags="-sU -PN"
                fi
                echo -en " dns/$proto: "
                test=$($nmap $dns $udpflags -p $dnsport 2>/dev/null)
                if ! echo "$test" | grep "$dnsport/$proto" | grep "open" &>/dev/null; then
                    echo -e "${cred}failed${creset}"
                else
                    local t=$(echo "$test" | grep "scanned in" | sed "s/^.*in \([0-9.]*\) seconds.*$/\1/g")
                    # Quoted so the shell cannot glob-expand the "*"
                    t=$(echo "$t*1000" | bc)
                    echo -e "${cgreen}$t ms${creset}"
                fi
            done
        fi

        ### Test inet dns
        echo -e "${cyellow} + Testing internet dns...${creset}"
        for inethost in $inethosts; do
            printf " ${cgreen}%-15s${creset} %s" "$inethost" "ping: "
            test=$($ping $inethost 2>/dev/null)
            if [[ $? != 0 ]]; then
                echo -en "${cred}failed${creset}"
            else
                local t=$(echo "$test" | grep "min/avg" | sed "s/.*= \([0-9.]*\)\/.*$/\1/g")
                echo -en "${cgreen}$t ms${creset}"
            fi

            echo -en " http: "
            test=$($nmap $inethost -p $inetport 2>/dev/null)
            if ! echo "$test" | grep "$inetport/tcp" | grep "open" &>/dev/null; then
                echo -e "${cred}failed${creset}"
            else
                local t=$(echo "$test" | grep "scanned in" | sed "s/^.*in \([0-9.]*\) seconds.*$/\1/g")
                # Quoted so the shell cannot glob-expand the "*"
                t=$(echo "$t*1000" | bc)
                echo -e "${cgreen}$t ms${creset}"
            fi
        done
    }
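
The listing refers to color variables (`cred`, `cgreen`, `cyellow`, `ccyan`, `creset`) that it never defines; presumably they come from elsewhere in the dotfiles. Without them the output still works, just uncolored. Here is a minimal sketch of what such definitions might look like. The specific colors are my guess; only the variable names come from the listing.

```bash
# Hypothetical color definitions; the listing uses these names but does not
# define them. The values are standard ANSI escape sequences, stored with a
# literal backslash so that `echo -e` and printf format strings expand them.
cred='\033[1;31m'
cgreen='\033[1;32m'
cyellow='\033[1;33m'
ccyan='\033[1;36m'
creset='\033[0m'

# Example use, mirroring how the listing prints a failure:
printf "${cred}failed${creset}\n"
```

With these set and the listing sourced into the shell, `havenet` runs the full check and `haveinet` only the internet part.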

UPDATE: Replacing with newer version that is a bit more clever.

UPDATE2: Added tool detection and platform detection.
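
The tool detection relies on `which`, which is an external program on some systems. A possible alternative is the shell builtin `command -v`. The sketch below only reports what is missing; it does not build the eval string that `toolsinit` produces, and the name `missing_tools` is hypothetical.

```bash
# missing_tools TOOL...: print the names of tools that cannot be found.
# Hypothetical helper; uses the POSIX `command -v` builtin instead of `which`.
missing_tools() {
    local missing="" tool
    for tool in "$@"; do
        if ! command -v "$tool" >/dev/null 2>&1; then
            missing="$missing $tool"
        fi
    done
    # Drop the leading space before printing
    echo "${missing# }"
}

# Check the same tools the script looks for
missing_tools /sbin/route /sbin/ifconfig ping nmap
```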

November 17th, 2008
