If you're new here I recommend starting with part 1.

Last time we looked at statements inside function bodies. Exciting stuff, but a bit puzzling. I bet you were asking yourself: How did those functions get into that module in the first place?

Statements at module level

When given a module object, dis.dis only disassembles the functions defined in it and shows nothing for the module-level statements themselves. For a more insightful view we will use python -m dis module.py.

Let's try a really simple one first.

a = 7

b = a + a

# disassembly

  1           0 LOAD_CONST               0 (7)
              3 STORE_NAME               0 (a)

  2           6 LOAD_NAME                0 (a)
              9 LOAD_NAME                0 (a)
             12 BINARY_ADD
             13 STORE_NAME               1 (b)
             16 LOAD_CONST               1 (None)
             19 RETURN_VALUE

The first thing to notice is the use of LOAD_NAME and STORE_NAME. We're at module level here, and LOAD_FAST/STORE_FAST only apply to local variables in a function body. But just like a function, a module stores the names it uses in a tuple which can be indexed into, as shown here: the 0 in STORE_NAME 0 (a) is the position of a in that tuple.

It's a bit more obscure to get at that storage, because a module does not have a func_code attribute attached to it like a function does. But we can compile the module's source into a code object ourselves and see what it contains:

code = compile("a = 7; b = a + a", "module.py", "exec")

print code.co_names

# ('a', 'b')

And there's our storage. The code object has various other co_* attributes which we won't go into right now.
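To see that indexing in action, here is a small sketch that walks the instructions and looks each operand up in co_names by hand. It is written for Python 3 (dis.get_instructions does not exist in Python 2), so the opcodes and offsets it sees may not match the Python 2 listing above. The variable names are only for illustration.

```python
import dis

code = compile("a = 7\nb = a + a\n", "module.py", "exec")

lookups = []
for ins in dis.get_instructions(code):
    if ins.opname in ("LOAD_NAME", "STORE_NAME"):
        # The operand of these opcodes is a plain index into co_names.
        lookups.append((ins.opname, ins.arg, code.co_names[ins.arg]))

for opname, index, name in lookups:
    print(opname, index, name)
```

Every LOAD_NAME or STORE_NAME carries nothing but a small integer; the actual name only lives in the tuple.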

Also worth noting: modules return None like functions do, which seems a bit redundant given that there isn't a way to capture that return value: value = import os is not valid syntax. And module imports feel like statements, not like expressions.
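The import statement gives us no handle on that None, but if we run the code object by hand we can at least observe it. A minimal sketch, assuming we are happy to use eval on a code object compiled in "exec" mode; the namespace dict stands in for the module's namespace and is not what import does internally.

```python
code = compile("a = 7\nb = a + a\n", "module.py", "exec")

namespace = {}
# eval accepts a code object; for "exec" mode code its result is always None.
result = eval(code, namespace)

print(result)          # None
print(namespace["b"])  # 14
```

So the useful outcome of running a module is the names left behind in the namespace, not the return value.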

Functions

Think about this first: when you import a module which contains two functions, what do you expect its namespace to contain? Those two names bound to their function objects, of course! See, that was not a trick question!

def plus(a, b):
    return a + b

def main():
    print plus(2, 3)

# disassembly

  1           0 LOAD_CONST               0 (<code object plus at 0xb73f4920, file "funcs.py", line 1>)
              3 MAKE_FUNCTION            0
              6 STORE_NAME               0 (plus)

  4           9 LOAD_CONST               1 (<code object main at 0xb73f47b8, file "funcs.py", line 4>)
             12 MAKE_FUNCTION            0
             15 STORE_NAME               1 (main)
             18 LOAD_CONST               2 (None)
             21 RETURN_VALUE

And so it is. Python will not give any special treatment to functions over other things like integers. A function definition (in source code) becomes a function object, and its body becomes a code object attached to that function object.

We see in this output that the code object is available. It's loaded onto the stack with LOAD_CONST just like an integer would be. MAKE_FUNCTION will wrap that in a function object. And STORE_NAME simply binds the function object to the name we gave the function.
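We can check this without the disassembler too. The sketch below compiles the same two functions, looks for code objects among the module's constants, and then runs the module code in a plain dict standing in for the module namespace. One liberty taken: main returns the sum instead of printing it, so that the snippet behaves the same on Python 2 and 3.

```python
import types

source = '''
def plus(a, b):
    return a + b

def main():
    return plus(2, 3)
'''

module_code = compile(source, "funcs.py", "exec")

# The function bodies are already compiled and stored as module constants.
body_names = [c.co_name for c in module_code.co_consts
              if isinstance(c, types.CodeType)]
print(body_names)

# Running the module code is what binds the names to function objects.
namespace = {}
exec(module_code, namespace)
print(type(namespace["plus"]))
print(namespace["main"]())
```

Before exec runs, the namespace is empty and only the code objects exist. Afterwards, plus and main are ordinary names bound to function objects.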

There is so little going on here that it's almost eerie. But what if the function body makes no sense?? What if it uses names that are not defined?!? Recall that Python is a dynamic language, and that dynamism is expressed by the fact that we don't care about the function body until someone calls the function! It could literally be anything (as long as it can be compiled successfully to bytecode).

It's enough that the function object knows what arguments it expects, and that it has a compiled function body ready to execute. That's all the preparation we need for that function call. No, really!
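Here is a quick way to convince yourself. The function below calls undefined_helper, a made-up name that is never defined anywhere, yet the def goes through without complaint. The failure only shows up once we actually call it.

```python
def broken(x):
    # 'undefined_helper' is a hypothetical name that does not exist.
    return undefined_helper(x)

# The function object already knows its argument count and has a compiled body.
print(broken.__code__.co_argcount)
print("undefined_helper" in broken.__code__.co_names)

# Only the call itself triggers the name lookup, and with it the error.
try:
    broken(42)
    message = None
except NameError as exc:
    message = str(exc)

print(message)
```

The unknown name is just another entry in the code object's names, waiting to be looked up at run time.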

Classes

Classes are much like functions, just a bit more complicated.

class Person(object):
    def how_old(self):
        return 5

# disassembly

  1           0 LOAD_CONST               0 ('Person')
              3 LOAD_NAME                0 (object)
              6 BUILD_TUPLE              1
              9 LOAD_CONST               1 (<code object Person at 0xb74987b8, file "funcs.py", line 1>)
             12 MAKE_FUNCTION            0
             15 CALL_FUNCTION            0
             18 BUILD_CLASS
             19 STORE_NAME               1 (Person)
             22 LOAD_CONST               2 (None)
             25 RETURN_VALUE

Let's start in the middle this time, at location 9. The code object for the entire class has been compiled, just like the function we saw before. At module level we have no visibility into this class; all we have is this single code object.

With MAKE_FUNCTION we wrap it in a function object and then call that function using CALL_FUNCTION. This will return something, but we can't tell from the bytecode what kind of object that is. Not to worry, we'll figure this out somehow.

What we know is that we have some object at the top of the stack. Just below that we have a tuple of the base classes for our new class. And below that we have the name we want to give to the class. With all those lined up, we call BUILD_CLASS.

If we peek at the documentation we can find out that BUILD_CLASS takes three arguments, the first being a dictionary of methods. So: methods_dict, bases, name. This looks pretty familiar: these are the same inputs needed for the __new__ method in a metaclass! At this point it would not be outlandish to suspect that the __new__ method is being called behind the scenes when this opcode is being executed.
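To make the resemblance concrete, here is a sketch that builds the same class by hand from those three ingredients. Recording is a hypothetical metaclass invented for this example; it only notes what __new__ receives. This does not prove what BUILD_CLASS does internally; it just shows that a name, a tuple of bases and a dictionary of methods are enough to make a class.

```python
def how_old(self):
    return 5

seen = []

class Recording(type):
    # Hypothetical metaclass that records the arguments passed to __new__.
    def __new__(mcs, name, bases, namespace):
        seen.append((name, bases, sorted(namespace)))
        return super(Recording, mcs).__new__(mcs, name, bases, namespace)

# name, bases, methods dict: the three items BUILD_CLASS finds on the stack.
Person = Recording("Person", (object,), {"how_old": how_old})

print(seen)
print(Person.__name__)
print(Person().how_old())  # 5
```

Calling the metaclass directly like this is the same three-argument form that the built-in type accepts.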

August 8th, 2013

August 8th, 2013

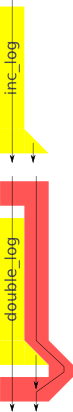

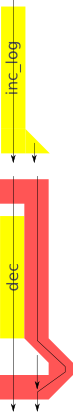

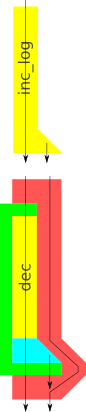

So how can we solve this? It's not that hard. If you look at the diagram you see that

So how can we solve this? It's not that hard. If you look at the diagram you see that  So far so good, but what if we now we want to mix logging functions with primitive functions in our pipeline?

So far so good, but what if we now we want to mix logging functions with primitive functions in our pipeline? Yes, just like that! Except that now we have two functions

Yes, just like that! Except that now we have two functions