One thing that's awesome in Python is having a small codebase that fits in a single directory. It's a comfy setting: everything is right there at your fingertips, and no directory traversal is needed to get hold of a file.

Flat structure

Let's check out one right now:

```
./frame.py
./master.py
./mystring.py
./page.py
./sentence.py
./user.py
```

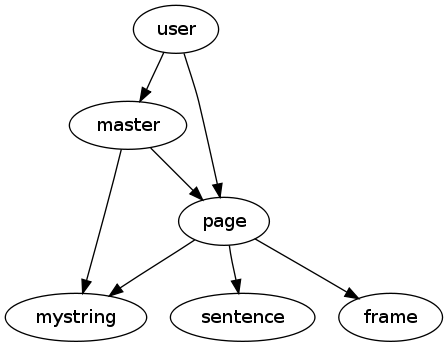

And here's the import relationship between them:

Easy, straightforward. I can execute any one of the files by itself to make sure the syntax is correct, or to run an `if __name__ == '__main__'` style unit test on it.
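For illustration, here is what a flat module with such a self-test might look like. Only the "says hello" line mirrors the post; the `shout()` helper and its assertion are hypothetical, invented just to have something to test. The print uses function-call syntax so the sketch runs on Python 3.

```python
# mystring.py: a hypothetical flat module. Only the greeting mirrors the post;
# shout() is invented purely so there is something to test.
print("mystring says hello!")


def shout(text):
    """Return text upper-cased with an exclamation mark appended."""
    return text.upper() + "!"


if __name__ == "__main__":
    # Runs only for `python mystring.py`, not when another module imports it.
    assert shout("hello") == "HELLO!"
    print("mystring self-test passed")
```

Running `python mystring.py` prints the greeting and runs the check; `import mystring` from user.py only prints the greeting.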

Tree structure

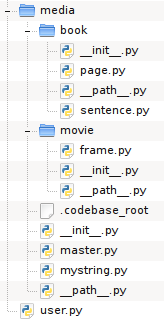

But suppose the codebase is expanding and I decide I have to get a bit more structured? I devise a directory structure like this:

```
./media/book/__init__.py
./media/book/page.py
./media/book/sentence.py
./media/__init__.py
./media/master.py
./media/movie/frame.py
./media/movie/__init__.py
./media/mystring.py
./user.py
```

The same files, but now with `__init__.py` files throughout the codebase to tell Python to treat each directory as a package. My import statements have to change too; let's see master:

```python
# from:
import mystring
import page

# to:
import media.mystring
import media.book.page
```

Nice one. Okay, let's see how this works now:

```
$ python user.py
page says hello!
sentence says hello!
frame says hello!
mystring says hello!
master says hello!
```

user imports page and then master. The first four lines are due to page, which imports three modules, and then we see master arriving on the scene. All the modules master imports have already been imported, so Python doesn't execute them again. Everything is in order.

As you can see, imports between modules in the tree work out just fine: page finds both the local sentence and the distant frame.
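The reason Python doesn't redo an import is its module cache, `sys.modules`: the first import executes the module's body and stores the module object there, and later imports simply return the cached object. Here is a minimal Python 3 sketch of that behaviour; the throwaway module name `chatty` is hypothetical, and the file is written to a temporary directory so the example is self-contained.

```python
import contextlib
import importlib
import io
import os
import sys
import tempfile


def import_twice_and_capture():
    """Import a throwaway module twice; return the captured output and
    whether both imports handed back the same module object."""
    sys.modules.pop("chatty", None)  # start clean if this is ever rerun
    tmpdir = tempfile.mkdtemp()
    with open(os.path.join(tmpdir, "chatty.py"), "w") as f:
        f.write('print("chatty says hello!")\n')

    sys.path.insert(0, tmpdir)
    importlib.invalidate_caches()  # let the import system notice the new file
    buf = io.StringIO()
    try:
        with contextlib.redirect_stdout(buf):
            first = importlib.import_module("chatty")   # executes the body
            second = importlib.import_module("chatty")  # served from sys.modules
    finally:
        sys.path.remove(tmpdir)
    return buf.getvalue(), first is second


output, same_object = import_twice_and_capture()
print(output.count("chatty says hello!"))  # 1
print(same_object)                         # True
print("chatty" in sys.modules)             # True
```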

But if we run master it's a different story:

```
$ python media/master.py
master says hello!
Traceback (most recent call last):
  File "media/master.py", line 3, in <module>
    import media.mystring
ImportError: No module named media.mystring
```

And it doesn't matter whether we run master.py from inside media/ or run media/master.py from the root directory; the result is the same. It's the same story with page, which sits deeper in the tree.

These modules, which used to be executable standalone, no longer are. :(

A hackish solution

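So we need something. The nature of the problem is this: when a script is executed directly, Python puts the script's own directory (here media/) at the front of `sys.path`, not the directory that contains media/. So once we traverse into media/, Python can no longer see that there is a package called media, because its parent directory isn't anywhere on `sys.path`. What if we could tell it?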
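That `sys.path` behaviour can be checked directly. This Python 3 sketch builds a throwaway copy of the media/ layout in a temporary directory (the `probe.py` script is hypothetical) and runs the same script from two working directories. Both runs should report media/ itself as `sys.path[0]`, and neither can import `media`.

```python
import os
import subprocess
import sys
import tempfile

# Hypothetical probe: report sys.path[0] and whether "media" is importable.
PROBE = (
    "import os, sys\n"
    "print(os.path.realpath(sys.path[0]))\n"
    "try:\n"
    "    import media\n"
    "    print('media is importable')\n"
    "except ImportError:\n"
    "    print('no module named media')\n"
)


def probe_from(cwd, script):
    """Run the probe with the current interpreter and return its output lines."""
    out = subprocess.run([sys.executable, script], cwd=cwd,
                         capture_output=True, text=True, check=True).stdout
    return out.splitlines()


# Hypothetical layout: <root>/media/__init__.py and <root>/media/probe.py
root = tempfile.mkdtemp()
media = os.path.join(root, "media")
os.mkdir(media)
open(os.path.join(media, "__init__.py"), "w").close()
with open(os.path.join(media, "probe.py"), "w") as f:
    f.write(PROBE)

from_root = probe_from(root, os.path.join("media", "probe.py"))  # like media/master.py from .
from_media = probe_from(media, "probe.py")                       # like master.py from media/
print(from_root)
print(from_media)
```

The working directory is not on `sys.path` when a script is run by file name, which is why both invocations fail the same way.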

The problem only pops up when the module is executed directly, that is, when `__name__ == '__main__'`. So that is the case in which we need to do something different.

Here's the idea. We put a file in the root directory of the codebase, a marker we can search for to tell where the root is. Whenever we need to find the root, we walk up the tree until we find that file, which is called `.codebase_root`. For our special when-executed logic, we use a module called `__path__` that we import conditionally. Here's what it looks like:

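```python
# __path__.py
import os
import sys


def find_codebase(mypath, codebase_rootfile):
    # Walk up from mypath until we reach a directory containing the marker file.
    root, branch = mypath, 'nonempty'
    while branch:
        if os.path.exists(os.path.join(root, codebase_rootfile)):
            # The marker sits in the top-level package directory (media/),
            # so its parent is the directory that has to be on sys.path.
            codebase_root = os.path.dirname(root)
            return codebase_root
        # os.path.split() yields an empty tail once we hit the filesystem root.
        root, branch = os.path.split(root)
    return None


def main(codebase_rootfile):
    thisfile = os.path.abspath(sys.modules[__name__].__file__)
    mypath = os.path.dirname(thisfile)
    codebase_root = find_codebase(mypath, codebase_rootfile)
    if codebase_root:
        # Put the parent of media/ first so "import media..." resolves.
        if codebase_root not in sys.path:
            sys.path.insert(0, codebase_root)


codebase_rootfile = '.codebase_root'
main(codebase_rootfile)
```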
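The walk-up search can be exercised on its own. This sketch copies `find_codebase` from above and runs it on a hypothetical temporary layout with the marker inside media/. Starting from media/book it returns the directory above media/; starting above the marker it returns `None`, assuming no stray `.codebase_root` exists further up the real filesystem.

```python
import os
import tempfile


def find_codebase(mypath, codebase_rootfile):
    # Same walk-up loop as in __path__.py above.
    root, branch = mypath, 'nonempty'
    while branch:
        if os.path.exists(os.path.join(root, codebase_rootfile)):
            return os.path.dirname(root)
        root, branch = os.path.split(root)
    return None


# Hypothetical layout: <project>/media/.codebase_root and <project>/media/book/
project = tempfile.mkdtemp()
book = os.path.join(project, "media", "book")
os.makedirs(book)
open(os.path.join(project, "media", ".codebase_root"), "w").close()

print(find_codebase(book, ".codebase_root") == project)  # True
print(find_codebase(project, ".codebase_root"))          # None
```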

So now, in any module that lives somewhere inside the media/ package, we add this bit of special handling. Here's master:

```python
print("master says hello!")

if __name__ == '__main__':
    import __path__

import media.mystring
import media.book.page
```

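Unfortunately, importing `__path__` unconditionally breaks the case where the file is not being executed directly, and I haven't been able to figure out why, so it has to be done like this. :/ A likely culprit, at least under Python 2's implicit relative imports, is the name itself: inside the media package, `import __path__` loads the file as `media.__path__`, which overwrites the package's own `__path__` attribute (the list of directories Python searches for its submodules), so later imports of not-yet-loaded submodules fail.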
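To check the whole trick end to end, here is a Python 3 reproduction sketch. It writes a trimmed-down tree into a temporary directory (no book/ or movie/ subpackages, and every file other than the `__path__` logic is a stand-in), then runs master.py directly from two working directories and once through a root-level user.py that imports it. All three runs should print both greetings.

```python
import os
import subprocess
import sys
import tempfile
import textwrap

# The post's __path__.py, ported to Python 3 (search logic unchanged).
PATH_HELPER = textwrap.dedent("""\
    import os
    import sys

    def find_codebase(mypath, codebase_rootfile):
        root, branch = mypath, 'nonempty'
        while branch:
            if os.path.exists(os.path.join(root, codebase_rootfile)):
                return os.path.dirname(root)
            root, branch = os.path.split(root)
        return None

    def main(codebase_rootfile):
        thisfile = os.path.abspath(sys.modules[__name__].__file__)
        codebase_root = find_codebase(os.path.dirname(thisfile), codebase_rootfile)
        if codebase_root and codebase_root not in sys.path:
            sys.path.insert(0, codebase_root)

    main('.codebase_root')
    """)

# A trimmed master.py: the conditional import only fires when run directly.
MASTER = textwrap.dedent("""\
    print('master says hello!')

    if __name__ == '__main__':
        import __path__

    import media.mystring
    """)


def build_tree():
    """Create a throwaway <root>/media package plus a root-level user.py."""
    root = tempfile.mkdtemp()
    media = os.path.join(root, "media")
    os.mkdir(media)
    files = {
        os.path.join(media, "__init__.py"): "",
        os.path.join(media, ".codebase_root"): "",  # the marker file
        os.path.join(media, "__path__.py"): PATH_HELPER,
        os.path.join(media, "mystring.py"): "print('mystring says hello!')\n",
        os.path.join(media, "master.py"): MASTER,
        os.path.join(root, "user.py"): "import media.master\n",
    }
    for path, body in files.items():
        with open(path, "w") as f:
            f.write(body)
    return root


def run(cwd, script):
    """Run a script with the current interpreter and return its stdout lines."""
    result = subprocess.run([sys.executable, script], cwd=cwd,
                            capture_output=True, text=True)
    return result.stdout.splitlines()


root = build_tree()
print(run(root, os.path.join("media", "master.py")))  # master executed directly
print(run(root, "user.py"))                            # master imported normally
```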

You end up with a tree like the one in the screenshot.


I've pushed the example to GitHub, so by all means have a look:

We pass the test: all the modules are executable standalone again. But I can't say it's awesome to have to do it like this.

April 13th, 2010


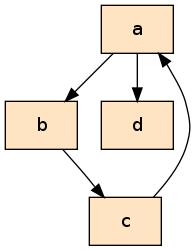

Graphs, they are fun. You can use them for all sorts of things, like drawing a picture of the internet (or your favorite regular expression!)
