I discovered Perl in the old millennium. I was building websites and I found out that HTML isn't Turing complete. Content and layout were cool, but they didn't really *do* anything. That's when I heard about CGI. I would find Perl scripts on sites that don't even exist anymore, upload them, get the 500 error, stare at the logs, revert any changes I had made, and try again. It wasn't sophisticated, and I wasn't even writing any code; all I wanted was to get the damn thing to work. The fact that the language was Perl made no difference to me either way. It was packed with frustration, though.

The principle of surprise

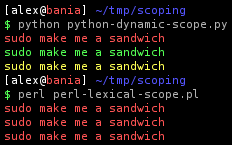

I've written code in Bash, C, C++, Haskell, Java, Pascal, PHP, Python, Ruby. So I feel like I've been around the block a few times, as far as choosing a language. And yet, Perl leaves me bewildered. One of the pillars of Ruby is something called "the principle of least surprise". What it means is that when you're not sure how to do something in Ruby, and you just do what seems most likely to work, it works. It's a wonderful quality, and it seems to be based on Perl, because Perl is the exact opposite.

Perl smacks horribly of apprenticeship culture. One where the novice is carefully guided through the valley of death, across the bridge over the pit of lava, past the nine-headed monsters, by a veteran monk. Send a tourist out there with a map and he's likely to be sent home in several pieces. Take a look at a common mistakes document and you find an immensity of pitfalls, not just in the language itself, but also from version to version.

This quality isn't merely at the fringes; it permeates the language as a whole. Take arrays, a fundamental type in any language. Say @arr holds "Larry" and "Wall". Type print @arr and you get LarryWall, the elements of the array printed out. Type print @arr."\n" and you get 2<newline>. Que? Put an array in a context where a scalar is expected and you get the length of the array. Better yet, call a function like this: func(@arr, $arg3). Guess how many arguments the function gets? Three. The array has been flattened and concatenated with $arg3. Yep, auto-flattening arrays, ain't it grand? I like automation, but this is too automatic.
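A minimal sketch of all three surprises, assuming @arr holds the two strings "Larry" and "Wall"; count_args and $arg3 are hypothetical names used only for illustration.

```perl
use strict;
use warnings;

my @arr = ("Larry", "Wall");

# List context: print receives both elements and joins them with $, (empty by default).
print @arr;                 # LarryWall
print "\n";

# The . operator imposes scalar context, so @arr evaluates to its length.
my $line = @arr . "\n";
print $line;                # 2

# Arrays flatten into the argument list: @_ ends up as ("Larry", "Wall", "x").
sub count_args { return scalar @_ }
my $arg3 = "x";
my $n = count_args(@arr, $arg3);
print "$n\n";               # 3
```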

It's very common to get an integer where you expect to have a string. One common Perl function is chomp, which removes a trailing newline (strictly, whatever string is in $/, the input record separator). I kept getting an integer from it until I figured out it's a destructive function: it modifies its argument in place and returns the number of characters it removed. This is another weird quality for a scripting language: you have lots of destructive functions that give integers as return values, as if you're doing syscalls in C.
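A small sketch of the chomp pitfall and the usual workaround, using a made-up input string:

```perl
use strict;
use warnings;

my $input = "hello\n";

# Pitfall: chomp edits its argument in place and returns how many characters it removed.
my $copy   = $input;
my $result = chomp($copy);
print "$result\n";          # 1
print "[$copy]\n";          # [hello]

# Common idiom: declare, assign, and chomp in a single statement.
chomp(my $clean = $input);
print "[$clean]\n";         # [hello]
```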

I was stuck on one problem for hours because the value I was printing never made it to the screen. It turns out it was because of output buffering. So how do you flush the buffer? I looked for a built-in flush function; there isn't one. Nah, you set the variable $| to a true value, which turns on autoflush for the currently selected output handle. Should have been obvious, but it wasn't.
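A sketch of the $| idiom. For what it's worth, the core IO::Handle module does offer autoflush and flush methods on filehandles; it is loaded explicitly here so the sketch also runs on older perls.

```perl
use strict;
use warnings;
use IO::Handle;   # adds autoflush() and flush() methods to filehandles

# Classic idiom: a true value in $| turns on autoflush for the currently
# selected output handle, which is STDOUT unless select() has been called.
$| = 1;
print "working... ";        # shows up immediately, no trailing newline needed

# IO::Handle spells the same thing out per handle...
STDOUT->autoflush(1);

# ...or flushes once without changing the buffering mode.
print "done\n";
STDOUT->flush;
```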

Here's another one. Say you set a variable in a config module and read it in a code module. Now you want to rename it. How many places do you have to change? The answer is four: 1) the declaration, 2) the use, 3) the explicit export in the config module with Exporter, 4) the explicit import in the code module. This is ridiculous.
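A sketch of the four places, assuming a hypothetical MyConfig module that exports a hypothetical $db_host. To keep it runnable as a single file, the module is inlined in a BEGIN block and registered in %INC; that is a demonstration trick, not how you would lay out real code.

```perl
use strict;
use warnings;

# Hypothetical MyConfig module, inlined so this sketch runs as one file;
# normally it would live in MyConfig.pm.
BEGIN {
    package MyConfig;
    use Exporter 'import';
    our @EXPORT_OK = qw($db_host);     # place 3: the export list
    our $db_host   = 'localhost';      # place 1: the declaration
    $INC{'MyConfig.pm'} = __FILE__;    # tell require the module is already loaded
}

package main;
use MyConfig qw($db_host);             # place 4: the import list
print "$db_host\n";                    # place 2: the actual use
```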

A bag of hacks

When it comes right down to it, Perl is an amalgamation of hacks in a big, messy package. Granted, many of the syntactic hacks are useful, like qw(). Others are completely incomprehensible, like all the variables called $<nonalpha>. These all mean something: $&, $`, $' and $+ are all related to regular expressions (the matched text, the text before it, the text after it, and the last matched group). $_, though, is used all over Perl to store "the thing you may want to have".
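A sketch of those regex variables and of $_ as the default topic; the sample string is made up. One caveat worth knowing: before Perl 5.20, merely mentioning $&, $` or $' anywhere in a program slowed down every regex match in it.

```perl
use strict;
use warnings;

my $text = "error: disk full";
my ($before, $matched, $after, $last_group);

if ($text =~ /(\w+): (\w+)/) {
    # Copy the punctuation variables right away; the next successful match overwrites them.
    ($before, $matched, $after, $last_group) = ($`, $&, $', $+);
}
print "[$before] [$matched] [$after] [$last_group]\n";   # [] [error: disk] [ full] [disk]

# $_ is the implicit "topic": grep sets it for each element,
# and a bare regex matches against it.
my @b_words = grep { /^b/ } qw(alpha beta gamma);
print "@b_words\n";         # beta
```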

Despite all this syntax, there's none for declaring formal parameters to a function. It's just like Bash: pass in whatever you want, and then read it out of an array (@_). One common idiom is my ($var1, $var2) = @_. If you only have one variable you might be tempted to drop the parentheses and write my $var = @_, which gives you a fun bug (an integer): now you're assigning an array to a scalar, which yields the array's length, rather than to a list.
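A sketch of the one-parameter bug next to two correct spellings; the greet functions are hypothetical.

```perl
use strict;
use warnings;

sub greet_buggy {
    my $name = @_;      # scalar assignment: @_ in scalar context is the argument COUNT
    return "Hello, $name";
}

sub greet {
    my ($name) = @_;    # list assignment: $name receives the first argument
    return "Hello, $name";
}

sub greet_shift {
    my $name = shift;   # shift with no argument takes from @_ inside a sub
    return "Hello, $name";
}

print greet_buggy("Larry"), "\n";   # Hello, 1
print greet("Larry"), "\n";         # Hello, Larry
print greet_shift("Larry"), "\n";   # Hello, Larry
```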

Most (modern) languages have settled on a sane policy on pass-by-value vs pass-by-reference. And there are those with a foot in each camp, making the coder constantly second-guess himself (thank you, C++). Perl, predictably, is conflicted about the issue. By and large you pass values around like you're in Bash, but pointers/references exist too. Here's a motivating example: how do you pass two arrays to a function? Derm. You... can't. When they come out the other end, the arrays have auto-flattened and it's now one big array. So here's how: func(\@arr1, \@arr2). And in the function you say my ($arr1, $arr2) = @_. What the? Bear with me. Now you have two references. To dereference you write @$arr1 for the whole array, and $$arr1[2] (or $arr1->[2]) for a single element. Same goes for %$ (hash) and &$ (function/closure reference). You sort of "wrap" the type being pointed to around the pointer. Needless to say, this syntax debauchery doesn't make code simpler.
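A sketch of both sides of the problem, with hypothetical function names: the flattened call first, then the reference version and the various dereferencing forms.

```perl
use strict;
use warnings;

my @arr1 = (1, 2, 3);
my @arr2 = (4, 5);

# Without references, both arrays flatten into one @_.
sub arg_count { return scalar @_ }
my $flat = arg_count(@arr1, @arr2);          # 5, not 2

# With references (\@), each array arrives intact.
sub describe {
    my ($first, $second) = @_;               # two array references
    my $count = @$first + @$second;          # @$ref is the whole array (its length in scalar context)
    my $elem  = $$first[2];                  # one element: $$ref[i] ...
    my $tail  = $second->[-1];               # ... or the arrow form $ref->[i]
    return ($count, $elem, $tail);
}
my ($total, $third, $final) = describe(\@arr1, \@arr2);

# The same "wrap the sigil around the reference" rule for hashes and code.
my $href    = { priority => 3 };
my $nkeys   = keys %$href;                   # %$ref is the whole hash
my $cref    = sub { return $_[0] * 2 };
my $doubled = &$cref($href->{priority});     # or $cref->(...)
```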

It gets better. Perl's support for complex data structures is really... interesting. I was messing with an array of hashes once, and I needed to sort the records by a key in the hash. (Picture a table where you click on a column name to sort by it, that sort of thing.) This might sound lame, but it's not obvious how to sort this. I spent hours on Google and found all sorts of examples, averaging about 15 lines. 15 lines to sort an array of hashes? What is this, C? I didn't understand them, so I kept looking. Eventually I found an academic-looking paper about the issue. It demoed various approaches, concluding with a four-liner that was supposed to be the best, hurrah! The code is so incredible that I have to show you.

    my @sorted =
        map $_->[0] =>
        reverse sort { $a->[1] cmp $b->[1] }
        map [ $_, pack('C1' => $_->{"priority"}) ] => @unsorted;

Let me try to unobfuscate this. There's an array of hashes, and we're sorting by the key called "priority", an integer value. In pack, we grab the value of "priority" for that particular hash and make a one-byte string of it (wtf). The map just outside pack does this for every hash, pairing each hash with its string. So what have we? A list of [hash, string] pairs. We now run reverse sort on this, comparing the strings, which orders the pairs by decreasing priority. And the outer map pulls the hashes back out of the pairs, now in sorted order. I think. I still don't understand all the details of this code. But imagine: convert every key you want to sort by to a frickin' string, sort the strings lexically, then put the hashes in whatever order their strings ended up in. It's madness.

(Disclaimer: I was a total Perl noob when I was looking for this code, so maybe I missed something, maybe I explained it wrong.)
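For comparison, a sketch assuming each hash has a numeric "priority" key (the sample records are made up). A plain sort with a comparator block already does the job in one line; the decorate, sort, undecorate pattern (the Schwartzian transform, of which the four-liner above is a variant) only pays off when the key is expensive to compute. The pack('C1') trick in the quoted code also only works for priorities from 0 to 255, since C packs a single unsigned byte.

```perl
use strict;
use warnings;

# Hypothetical sample data: an array of hashes, each with an integer "priority".
my @unsorted = (
    { name => 'backup',  priority => 2 },
    { name => 'deploy',  priority => 9 },
    { name => 'cleanup', priority => 5 },
);

# Direct approach: numeric comparison, with $b before $a for descending order.
my @sorted = sort { $b->{priority} <=> $a->{priority} } @unsorted;

# Schwartzian transform: compute each key once, sort [hash, key] pairs, then unwrap.
my @sorted_st =
    map  { $_->[0] }
    sort { $b->[1] <=> $a->[1] }
    map  { [ $_, $_->{priority} ] } @unsorted;

print join(', ', map { $_->{name} } @sorted), "\n";   # deploy, cleanup, backup
```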

Somewhere in this madness someone decided to introduce a little order. It's become standard practice to put this verse at the top of your module: use strict. What it does is make your code no longer run (presumably the reason it's not on by default). It adds some static checking, so misspelled variable names no longer fail silently, and it also rejects symbolic references and stray barewords.
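A sketch of strict catching a typo. String eval is used only so the sketch can show the error without aborting; $cuont is a deliberate misspelling of $count.

```perl
use strict;
use warnings;

# Compile a snippet at run time with a string eval so the error can be inspected.
my $strict_ok = eval q{
    use strict;
    my $count = 1;
    $cuont++;        # typo for $count
    1;
};
my $strict_error = $@;       # save it: the next eval resets $@
print "under strict: $strict_error" unless $strict_ok;

# The same typo with strict vars switched off compiles fine: it just
# creates a new package variable, and $count silently stays 1.
my $count_after = eval q{
    no strict 'vars';
    my $count = 1;
    $cuont++;
    $count;
};
```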

Maddening syntax

Perl not only has syntax for "useful things", it has syntax everywhere. Like in PHP, the $ sign must accompany every variable, always. Except if it's an array, in which case you write my @arr = (elem1, elem2), and my %hash = (key=>"value") for hashes. But then again, when you access an array element you write $arr[1], and likewise $hash{key} for a hash. So what the heck? You can also build a hash and hold it through a reference, which goes my $data = {key=>"value"}. More syntax, more fun, right? Basically, it's @ for arrays, % for hashes, & for functions, and $ for... everything else.
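The sigil rules in one place, as a small sketch with made-up values:

```perl
use strict;
use warnings;

my @arr  = ('elem1', 'elem2');      # whole array: @
my %hash = (key => 'value');        # whole hash: %
my $elem = $arr[1];                 # one element is a scalar, so: $
my $val  = $hash{key};              # same for one hash value
my $data = { key => 'value' };      # anonymous hash; $data holds a reference
my $via  = $data->{key};            # the arrow dereferences the reference
my $size = scalar @arr;             # the whole array in scalar context: 2
```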

And, of course, always remember my, because Perl thinks all variables should be global by default (wtf?).

I swear my fingers are more tired from Perl than any other language. There is so much syntax to type. At least it's tolerable when you have vim's tab completion set up. It's not "intelligent", so it doesn't filter out suggestions that don't fit the context, but it's good enough so you never have to type an identifier or keyword twice.

So why Perl?

Despite everything, in the end Perl is not such a horrible language. As the saying goes, "you get used to everything", which implies that every language is usable, since you will eventually get used to it. The benchmark, then, should be how long that takes you. And Perl is awful at first, a terrible language to "try stuff out" in. Realize you have to change the type of a variable, and because of the silly $/@/% syntax you have to run all around your code to change every use of it. On the other hand, if you know what you need up front, it's not as painful. And as I'm finding out, it doesn't take that long to adapt.

The nice thing about Perl is that it's so close to the shell; it's a sort of superset of the shell. You have grep built in, you have regular expressions as native as they get, you have various shell commands included, and fast (not necessarily easy) access to sockets, pipes, all the good stuff. It's nice to have thin wrappers around syscalls; it sure as hell beats doing it in C. And heck, I like to break lines with . (concat) when printing strings, and because the ; is required I don't have to stress about where the statement ends.
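A small sketch of that shell-like side: reading a command's output through a pipe, filtering it with the built-in grep, and printing with . to break a long line. The printf command is only a stand-in, and the sketch assumes a Unix-like system.

```perl
use strict;
use warnings;

# Read a command's output through a pipe, as a shell would.
open(my $fh, '-|', 'printf', '%s\n', 'alpha', 'beta', 'gamma')
    or die "cannot start printf: $!";
chomp(my @lines = <$fh>);
close($fh) or die "printf failed: $?";

# Built-in grep with a native regex, roughly `... | grep '^b'` in the shell.
my @b_lines = grep { /^b/ } @lines;

# Breaking a long print across lines with the . operator; the ; marks the real end.
print "Matched " . scalar(@b_lines) . " of " . scalar(@lines)
    . " lines: @b_lines\n";
```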

Beyond that, Perl has its place in the language ecosystem. Learning Perl is a way to understand Ruby, which is based, in part, on Perl. It's also a way to understand C, which Perl inherits from. That isn't to say using $_ in Ruby is a great idea, but now at least I know what it is when I see it.

And you know the blocks/closures that everyone loves about Ruby? Perl has them too. You can't write them as neatly, it is Perl after all, but I do quite like map { /$device on ([^ ]+)/ } $mount_data;
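A fleshed-out sketch of that map, assuming hypothetical mount output already split into lines and a made-up device name. The \Q...\E around $device is not in the one-liner above; it makes any regex metacharacters in the device name match literally.

```perl
use strict;
use warnings;

# Hypothetical mount output, one line per mounted filesystem.
my @mount_lines = (
    "/dev/sda1 on / type ext4 (rw)",
    "/dev/sdb1 on /mnt/backup type ext4 (rw)",
);
my $device = '/dev/sdb1';

# A regex in list context returns its captures; non-matching lines contribute nothing.
my @mount_points = map { /^\Q$device\E on (\S+)/ } @mount_lines;
print "@mount_points\n";    # /mnt/backup
```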

People obviously have their own criteria for picking a language. I've realized that perhaps the most important thing is how the language lets you manage data (or maybe I'm just saying that because I like Python?). Perl is definitely not a great choice here. It has good string handling, but once you get into multidimensional types (and I haven't even mentioned Perl's "object oriented" features) I run screaming back to Python.

I suppose Perl's chaos can in part be excused on historical grounds. After all, modern languages like Python and Ruby that don't have these problems had the benefit of Perl's example. Then again, Perl isn't the only older language still in use, and it does stand out as the most chaotic.

October 7th, 2008
