I addressed the issue of wordpress upgrades once before, with a hacky home-grown solution. For a while now I've been using git instead, which is the organized way of doing it. This method is not specific to wordpress: it works with any piece of code where you want to keep current with upstream updates while carrying some local modifications of your own.

To recap the problem briefly: you installed wordpress on your server. Then you made some changes to the code; maybe you changed the fonts in the theme, for instance. (In practice, you will have a lot more modifications if you've installed any plugins or uploaded files.) Now the wordpress people are saying there is an upgrade available, so you want to upgrade, but you also want to keep your changes.

If you are handling this manually, you now have to track down all the changes you made, do the upgrade, and then go over the list and see if they all still apply, and if so re-apply them. git just says: you're using a computer, you git, I'll do it for you. In fact, with git you can keep track of what changes you have made and have access to them at any time. And that's exactly what you want.

1. Starting up (the first time)

The first thing you should find out is which version of wordpress you're running. In this demo I'm running 2.6. So what I'm going to do is create a git repository and start with the wordpress-2.6 codebase.

# download and extract the currently installed version

wget http://wordpress.org/wordpress-2.6.tar.gz

tar xzvf wordpress-2.6.tar.gz

cd wordpress

# initiate git repository

git init

# add all the wordpress files

git add .

# check status of repository

git status

# commit these files

git commit -m 'check in initial 2.6.0 upstream'

# see a graphical picture of your repository

gitk --all

This is the typical way of initializing a repository: you run an init command to get an empty repo (you'll notice a .git/ directory was created). Then you add some files and check the status. git will tell you that you've added lots of files, which is correct. So you make a commit. Now you have one commit in the repo. You'll want to use the gui program gitk to visualize the repo; I think you'll find it extremely useful. This is what your repo looks like now:

![]()

gitk is saying that you have one commit, it's showing the commit message, and it's telling you that you're on the master branch. This may seem odd seeing as how we didn't create any branches, but master is the standard branch that every repository gets on init.
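If gitk isn't available (on a web server without a display, for instance), roughly the same picture can be had in the terminal. This is a minimal sketch, not part of the original workflow: the scratch directory and the placeholder file stand in for the real wordpress tree, and the demo user name and email are made up so the commit works on a fresh machine.

```shell
# Throwaway repository so the example is safe to run anywhere.
demo=$(mktemp -d)
cd "$demo"
git init -q
# Name the initial branch master explicitly; newer git versions may default to another name.
git symbolic-ref HEAD refs/heads/master

# Placeholder file standing in for the wordpress code.
echo "placeholder" > index.php
git add .
git -c user.name=demo -c user.email=demo@example.com commit -q -m 'check in initial upstream'

# Text-mode counterpart of gitk --all: one line per commit, decorated with branch names.
git log --oneline --graph --decorate --all

# List branches; the current one is marked with an asterisk.
git branch
```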

The plan is to keep the upstream wordpress code separate from your local changes, so you'll only be using master to add new wordpress releases. For your own stuff, let's create a new branch called mine (the names of branches don't mean anything to git, you can call them anything you want).

# create a branch where I'll keep my own changes

git branch mine

# switch to mine branch

git checkout mine

# see how the repository has changed

gitk --all

When we now look at gitk the repository hasn't changed dramatically (after all we haven't made any new commits). But we now see that the single commit belongs to both branches master and mine. What's more, mine is displayed in boldface, which means this is the branch we are on right now.

![]()

What this means is that we have two branches, but they currently have the exact same history.

2. Making changes (on every edit)

So now we have the repository all set up and we're ready to make some edits to the code. Make sure you do this on the mine branch.

If you're already running wordpress-2.6 with local modifications, now is the time to import your modified codebase. Just copy your wordpress/ directory to the same location. This will obviously overwrite all the original files with yours, and it will add all the files that you have added (plugins, uploads etc). Don't worry though, this is perfectly safe. git will figure out what's what.

Importing your codebase into git only needs to be done the first time, after that you'll just be making edits to the code.

# switch to mine branch

git checkout mine

# copy my own tree into the git repository mine branch

#cp -ar mine/wordpress ..

# make changes to the code

#vim wp-content/themes/default/style.css

# check status of repository

git status

When you check the status you'll see that git has figured out which files have changed between the original wordpress version and your local one. git also shows the files that are in your version but not in the original wordpress distribution as "untracked files", i.e. files that are lying around that you haven't yet asked git to keep track of.

So let's add these files, so that from now on git will tell you every time something happens to them, and then commit the changes. You want to write a commit message that describes exactly the changes you made. That way, when you look at the repo history later on, the messages will tell you something useful.

# add all new files and changed files

git add .

# check in my changes on mine branch

git commit -m 'check in my mods'

# see how the repository has changed

gitk --all

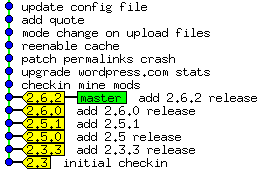

When you look at the repo history with gitk, you'll see a change. There is a new commit on the mine branch. Furthermore, mine and master no longer coincide. mine originates from (is based on) master, because the two dots are connected with a line.

![]()

What's interesting here is that this commit history is exactly what we wanted. If we go back to master, we have the upstream version of wordpress untouched. Then we move to mine, and we get our local changes applied to upstream. Every time we make a change and commit, we'll add another commit to mine, stacking all of these changes on top of master.

You can also use git log master..mine to see the commit history, and git diff master..mine to see the actual file edits between those two branches.
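To make those two commands concrete, here is a small sketch in a throwaway repository. The file name, its contents and the commit messages are invented for illustration; only the master and mine branch names come from the setup above.

```shell
# Scratch repository with a master/mine layout like the one described above.
demo=$(mktemp -d)
cd "$demo"
git init -q
git symbolic-ref HEAD refs/heads/master
git config user.name demo
git config user.email demo@example.com

# Stand-in for the upstream release on master.
echo "body { color: black; }" > style.css
git add .
git commit -q -m 'check in initial upstream'

# One local modification on mine.
git checkout -q -b mine
echo "body { color: blue; }" > style.css
git add .
git commit -q -m 'make body text blue'

# Commits that are on mine but not on master.
git log --oneline master..mine

# The actual file edits separating the two branches.
git diff master..mine
```

The log output lists only your own commits, which makes it a handy inventory of local modifications before an upgrade.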

3. Upgrading wordpress (on every upgrade)

Now suppose you want to upgrade to wordpress-2.6.2. You have two branches, mine for local changes, and master for upstream releases. So let's change to master and extract the files from upstream. Again you're overwriting the tree, but by now you know that git will sort it out. ;)

# switch to the master branch

git checkout master

# download and extract new wordpress version

cd ..

wget http://wordpress.org/wordpress-2.6.2.tar.gz

tar xzvf wordpress-2.6.2.tar.gz

cd wordpress

# check status

git status

Checking the status at this point is fairly important, because git has now figured out exactly what has changed in wordpress between 2.6 and 2.6.2, and here you get to see it. You should probably look through this list quite carefully and think about how it affects your local modifications. If a file is marked as changed and you want to see the actual changes you can use git diff <filename>.
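One caveat worth keeping in mind: extracting a tarball over the tree only adds and overwrites files, so a file that upstream deleted in the new release would stay behind and look unchanged to git. A possible workaround, sketched here in a throwaway repository with made-up file names (a.php, old.php, new.php) and a plain directory standing in for the tarball, is to delete all tracked files on master before extracting, and then stage with git add -A so that deletions are recorded too.

```shell
# Scratch area: "repo" plays the wordpress tree, "release" plays the new tarball.
demo=$(mktemp -d)
cd "$demo"
mkdir repo release
cd repo
git init -q
git symbolic-ref HEAD refs/heads/master
git config user.name demo
git config user.email demo@example.com
printf 'v1\n' > a.php
printf 'obsolete\n' > old.php
git add .
git commit -q -m 'check in old upstream'

# Hypothetical new release: a.php changed, old.php dropped, new.php added.
printf 'v2\n' > ../release/a.php
printf 'new\n' > ../release/new.php

# Remove every tracked file first, so files dropped upstream do not linger.
git ls-files -z | xargs -0 rm -f

# Stand-in for extracting the new tarball over the tree.
cp -R ../release/. .

# Stage additions, modifications and deletions alike, then review the list.
git add -A
git status --short
```

In the status list, old.php now shows up as deleted instead of silently surviving the upgrade.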

Now you add the changes and make a new commit on the master branch.

# add all new files and changed files

git add .

# commit new version

git commit -m 'check in 2.6.2 upstream'

# see how the repository has changed

gitk --all

When you now look at the repo history there's been an interesting development. As expected, the master branch has moved on one commit, but since this is a different commit than the one mine has, the branches have diverged. They have a common history, to be sure, but they are no longer on the same path.

![]()

Here you've hit the classical problem of a user who wants to modify code for their own needs. The code is moving in two different directions: one is upstream, the other is your own.

Now cheer up, git knows how to deal with this situation. It's called "rebasing". First we switch back to the mine branch. Then we use git rebase, which takes all the commits in mine and stacks them on top of master again (i.e. we base our commits on master).

# check out mine branch

git checkout mine

# stack my changes on top of master branch

git rebase master

# see how the repository has changed

gitk --all

Keep in mind that rebasing can fail. Suppose you made a change on line 4, and the wordpress upgrade also made a change on line 4. How is git supposed to know which of these to use? In such a case you'll get a "conflict". This means you have to edit the file yourself (git will show you where in the file the conflict is) and decide which change to apply. Once you've done that, git add the file and then run git rebase --continue to keep going with the rebase.
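Here is what such a conflict can look like from start to finish, as a sketch in a scratch repository. The file and its one-line contents are invented, and the resolution shown (keeping the local value) is just one possible choice. GIT_EDITOR=true is there only so the script doesn't stop to ask for a commit message.

```shell
# Scratch repository where master and mine both change the same line.
demo=$(mktemp -d)
cd "$demo"
git init -q
git symbolic-ref HEAD refs/heads/master
git config user.name demo
git config user.email demo@example.com

printf 'color: black;\n' > style.css
git add .
git commit -q -m 'check in old upstream'

# Local change on mine.
git checkout -q -b mine
printf 'color: blue;\n' > style.css
git commit -q -a -m 'make text blue'

# Upstream changes the very same line on master.
git checkout -q master
printf 'color: gray;\n' > style.css
git commit -q -a -m 'check in new upstream'

# The rebase stops with a conflict; "|| true" keeps this script going.
git checkout -q mine
git rebase master || true

# git status names the conflicted file; the file itself now holds conflict markers.
git status --short

# Resolve by writing the version we want (here: keep our blue), then continue.
printf 'color: blue;\n' > style.css
git add style.css
GIT_EDITOR=true git rebase --continue
```

If you would rather give up and return to the state before the rebase, git rebase --abort does that.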

Although conflicts happen, they are rare. All of your changes that don't touch the lines changed by the upgrade will be applied automatically to wordpress-2.6.2, as if you were doing it yourself. You'll only hit a conflict where, if you were doing this manually, it would not be obvious how to apply your modification either.

Once you're done rebasing, your history will look like this. As you can see, all is well again: we've returned to the state that we had at the end of section 2. Once again, your changes are based on upstream. This is what a successful upgrade looks like, and you didn't have to do it manually.

![]()

Tips

Don't be afraid to screw up

You will, lots of times. The way that git works, every working directory is a full copy of the repository. So if you're worried that you might screw up something, just make a copy of it before you start (you can do this at any stage in the process), and then you can revert to that if something goes wrong. git itself has a lot of ways to undo mistakes, and once you learn more about it you'll start using those methods instead.
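Both kinds of safety net can be sketched in a few lines. The directory names and the mine-backup branch name are hypothetical, and git reset --hard is only one of git's undo mechanisms; note that it throws away uncommitted work as well.

```shell
# Scratch repository with a mine branch, as in the tutorial.
demo=$(mktemp -d)
mkdir "$demo/wordpress"
cd "$demo/wordpress"
git init -q
git symbolic-ref HEAD refs/heads/master
git config user.name demo
git config user.email demo@example.com
printf 'original\n' > index.php
git add .
git commit -q -m 'check in upstream'
git checkout -q -b mine

# Option 1: a plain copy of the whole directory, .git/ included.
cp -a "$demo/wordpress" "$demo/wordpress.bak"

# Option 2: a bookmark branch (hypothetical name) at the current commit.
git branch mine-backup

# Something goes wrong on mine...
printf 'broken\n' > index.php
git commit -q -a -m 'a change I regret'

# ...so move mine back to the bookmark, discarding the bad commit.
git reset -q --hard mine-backup
```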

Upgrade offline

If you are using git to upgrade wordpress on your web server, make a copy of the repo before you start, then do the upgrade on that copy. When you're done, replace the live directory with the upgraded one. You don't want your users to access the directory while you're doing the upgrade, both because it will look broken to them, and because errors can occur if you try to write to the database in this inconsistent state.
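The copy-and-swap could look roughly like this. It is only a sketch: the scratch directory stands in for wherever your web server keeps the site, the wordpress.new and wordpress.old names are made up, and the single echo line stands in for the whole upgrade procedure from section 3.

```shell
# Stand-in for the directory that contains the live wordpress/ tree.
site=$(mktemp -d)
mkdir "$site/wordpress"
echo "live" > "$site/wordpress/version"

# Work on a copy, leaving the live directory untouched.
cp -a "$site/wordpress" "$site/wordpress.new"

# ... run the upgrade (checkout master, extract, commit, rebase mine) inside the copy ...
echo "upgraded" > "$site/wordpress.new/version"

# Swap: keep the old tree around in case you need to go back.
mv "$site/wordpress" "$site/wordpress.old"
mv "$site/wordpress.new" "$site/wordpress"
```

Two renames keep the window in which the site is missing very short, and the old tree stays around until you're satisfied the new one works.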

Keep your commits small and topical

You will probably be spending most of your time in stage 2 - making edits. It's good practice to make a new commit for every topical change you make. So if your goal is to "make all links blue" then you should make all the changes related to that goal, and then commit. By working this way, you can review your repo history and be able to see what you tried to accomplish and what you changed on each little goal.
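If you've already made two unrelated edits before committing, you can still split them by naming files explicitly instead of using git add with a dot. A small sketch, with invented files and commit messages:

```shell
# Scratch repository with a mine branch.
demo=$(mktemp -d)
cd "$demo"
git init -q
git symbolic-ref HEAD refs/heads/master
git config user.name demo
git config user.email demo@example.com
printf 'a { color: black; }\n' > style.css
printf 'old footer\n' > footer.php
git add .
git commit -q -m 'check in upstream'
git checkout -q -b mine

# Two unrelated edits sitting in the working tree.
printf 'a { color: blue; }\n' > style.css
printf 'new footer\n' > footer.php

# Commit each under its own goal by staging only the relevant file.
git add style.css
git commit -q -m 'make all links blue'
git add footer.php
git commit -q -m 'reword footer'

# The history on mine now reads as a list of goals.
git log --oneline master..mine
```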

Revision control is about working habits

You've only seen a small, albeit useful, slice of git in this tutorial. git is a big and complicated program, but as with many other things, it already pays off if you know a little about it: it allows you to be more efficient. So don't worry about not knowing the rest, it will come one step at a time. Above all, git is about the way you work, which means you won't completely change your working habits overnight. It will have to be gradual.

This tutorial alone should show you that it's entirely possible to keep local changes and still upgrade frequently without a lot of effort or risk. I used to dread upgrades, thinking it would be a lot of work and my code would break. I don't anymore.

September 21st, 2008
