Chances are you use more than one computer in your daily/weekly/monthly routine. Probably not because you want to; it would be more practical for all of us to just have one place to store all our stuff, but alas.

A problem

Anyway, if you do, you know the pain of sitting down somewhere without your carefully set up and configured environment, dropped into some subpar default world that doesn't have any of your personal goodies.

I endured this for a number of years, until eventually I decided to fix it. Mind you, the reason there isn't a standard solution for this is that the problem has a rather large number of variables. But for this approach you need:

- storage you can reach from everywhere (HTTP, FTP, etc.)

- bash or zsh

The idea came up when I started fiddling around with zsh, trying to get a reasonable prompt working. Once I finally had one, I obviously wanted the same environment that I already have in bash. And not knowing whether I'd love zsh enough to use it permanently, I also wanted the option to use bash whenever I might want to.

To complicate things a bit, I also like the option to have my prompt look slightly different depending on the host I'm logged into. One too many reboots when logged into a remote server (thinking I was rebooting the local machine) made me introduce that as policy. :D

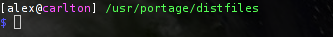

So my prompt looks like this, with the hostname in a different color on every host. I also turn the current directory red when I'm root, to remind me of that fact.

After a lot of trial and error I eventually got my zsh prompt looking very similar.

Finally, some hosts may need settings of their own, so one should make allowances for that. For instance, perhaps you want to set PATH or LD_LIBRARY_PATH on just one host.

A solution

All of these concerns produced the following files:

.zshrc

.zlogout

.zsh/functions/

.zsh/functions/prompt_numerodix_setup

.bashrc

.bash_profile

.bash_logout

.myshell/bash_local

.myshell/colors

.myshell/colors_zsh

.myshell/common

.myshell/common_local

.myshell/hostcolor_local

.myshell/zsh_local

The .bash* and .z* files are the usual stuff, of course. But everything common to bash and zsh lives in .myshell/common, which is sourced by both .bashrc and .zshrc. That way we can share settings between them. Further, everything named *_local is specific to the host, so these files are not distributed as part of the config update.
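The sourcing itself isn't shown in this post. As a minimal sketch of what the relevant lines in .bashrc could look like, assuming the file layout above (the helper name source_if_exists and the order of the files are my assumptions here):

```shell
# Hypothetical helper: source a file only if it exists and is readable,
# so a missing *_local file never breaks shell startup.
source_if_exists() {
    if [ -r "$1" ]; then
        . "$1"
    fi
}

# In .bashrc: shared settings first, then host-local overrides.
source_if_exists "$HOME/.myshell/common"
source_if_exists "$HOME/.myshell/common_local"
source_if_exists "$HOME/.myshell/bash_local"
# .zshrc would do the same, with zsh_local in place of bash_local.
```

Loading the local files last means a host can override anything from the shared file, such as PATH.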

But the most useful bit is the pair of functions called pushcfg and pullcfg, in .myshell/common, which do the actual work of storing updates of these files in the central location. I use a website for this, but you could use some other easy-to-reach location just as well.

To see what happens, let's look at the pullcfg function.

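    pullcfg(){
        # remember where we were, then work from the home directory
        oldcwd=$(pwd); cd ~ || return 1
        # fetch the archive from the central location
        wget http://www.matusiak.eu/numerodix/configs/cfg.tar.gz
        # unpack it over the existing files, then clean up
        gunzip cfg.tar.gz
        tar xvf cfg.tar
        rm cfg.tar
        # make sure the host-local files exist (touch leaves existing ones intact)
        touch .myshell/common_local .myshell/bash_local .myshell/zsh_local .myshell/hostcolor_local
        # keep the zsh function directory private
        chmod -R 700 .zsh
        cd "$oldcwd"
    }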

So the tar file is downloaded and extracted, overwriting the existing files. Then all the local files are created empty (unless they already exist) so you don't have to remember their names.

So all you do is log into a host, then download and unpack the file once by hand. From then on, running pullcfg updates everything. After that you should log out and log back in to see the effects.
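The counterpart, pushcfg, isn't shown in this post. As a rough sketch of its packing half only, assuming the file list above (the name packcfg and the temporary file cfg.list are hypothetical, and the upload step is left out because it depends on the storage you use):

```shell
# Hypothetical sketch: build cfg.tar.gz in the home directory from the
# shared files, leaving out everything named *_local.
packcfg() {
    oldcwd=$(pwd); cd ~ || return 1
    : > cfg.list
    for item in .zshrc .zlogout .zsh .bashrc .bash_profile .bash_logout .myshell; do
        # Skip anything that does not exist on this host.
        if [ -e "$item" ]; then
            find "$item" -type f ! -name '*_local' >> cfg.list
        fi
    done
    # -T reads the names of the files to archive from cfg.list.
    tar czf cfg.tar.gz -T cfg.list
    rm cfg.list
    cd "$oldcwd"
}
```

After this, cfg.tar.gz would still need to be copied to wherever pullcfg downloads it from.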

But back to the prompt issue. A common problem with bash is that it has colors but there's no easy way to use them. The file .myshell/colors defines variable names for them, so everything related to colors is made much simpler. From there on, the prompt can be set quite simply like so.

PS1="${cbwhite}[\u@${host}\H${cbwhite}] ${cbgreen}\w \n${cbblue}$ ${creset}"

cbwhite means "color, bold, white", while cwhite means "color, white" and is the darker shade of the same color. Now, since zsh handles colors differently, .myshell/colors_zsh redefines these variables just for zsh, so that they work in both shells.
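To give an idea of what sits behind those names, here is a sketch of a few definitions of the kind .myshell/colors might contain for bash. The variable names follow the scheme above, but the exact values are my assumption; they are the standard ANSI color escapes wrapped in bash's prompt markers.

```shell
# Sketch of bash-only color definitions.
# \[ and \] tell bash the enclosed bytes take up no width in the prompt.
creset='\[\e[0m\]'       # back to the terminal default
cwhite='\[\e[0;37m\]'    # color, white (the darker shade)
cbwhite='\[\e[1;37m\]'   # color, bold, white
cbgreen='\[\e[1;32m\]'
cbblue='\[\e[1;34m\]'
cbmagenta='\[\e[1;35m\]'

# The same prompt as above, minus the per-host color.
PS1="${cbwhite}[\u@\H] ${cbgreen}\w \n${cbblue}$ ${creset}"
```

Without the \[ \] markers bash miscounts the prompt width, and long command lines wrap in the wrong place.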

But what about changing the color of the hostname with every host? Glad you asked. The first time I extract the tar file on a new host, the prompt is not colored. This is to remind me that I haven't set a color for the hostname on this host yet. The hostname's color is defined in .myshell/hostcolor_local. So in the screenshot above it contains the line "cbmagenta". Once this file exists and is non-empty, the prompt becomes colored.
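The lookup could be done roughly like this. It is a sketch only: the helper name hostcolor is hypothetical, and I'm assuming the ${host} variable in the prompt is filled from its output.

```shell
# Hypothetical helper: print the color escape named in the host-local
# file, or nothing when the file is missing or empty.
hostcolor() {
    file=${1:-$HOME/.myshell/hostcolor_local}
    [ -s "$file" ] || return 0
    # The first line holds a variable name such as cbmagenta.
    read -r name < "$file"
    case $name in
        ''|*[!a-z]*) return 0 ;;   # ignore anything but a plain lowercase name
    esac
    # Expand the variable with that name; eval works in both bash and zsh.
    eval "printf '%s' \"\$$name\""
}

host=$(hostcolor)
```

An empty result then signals that no color has been chosen yet, so the caller can fall back to the plain prompt.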

And so the whole thing works, with just enough organization and flexibility to do the right things. :)

Want to test drive? Download the tar file:

Of course, once the opportunity presents itself to migrate settings, I take advantage of that to also include .vimrc and whatever else is useful to have.

July 27th, 2007
