I know it's a strange question to ask. Technology is *meant* to be complicated; it solves complicated problems, after all. But I wonder if there isn't a point where it becomes too complex to be manageable. I say "technology" when I really mean "software", because from a user's point of view, "technology" is what they feel they're dealing with.

Now, "too complex" needs to be qualified. It will always be possible to solve problems, but the more complex they are, the more time it takes. And once solving a problem takes so much time that it's no longer worth it, we have a dilemma.

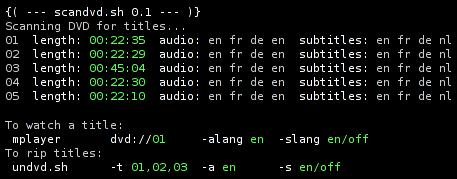

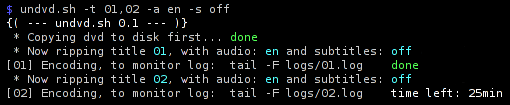

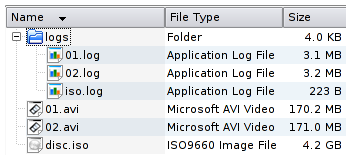

It's probably fair to say that users are pretty comfortable with software on their own computer. It's a familiar environment, and while there are failures sometimes, those problems can be solved by you or "your geeky friend". Everything you need to fix the problem is in your Emergency Kit: your DVD repository of software (fully legal software, if you run open source). In the worst case, you reinstall the OS and you're golden.

But since the advent of the internet, we have taken this one step further. "Technology" is all about hiding the complexities of the systems behind OK/Cancel dialog boxes. It's about making complicated things appear simple. And that's a wonderful achievement. The "early internet" *was* simple. Web and email are both remarkably simple technologies; there isn't a whole lot that can go wrong. And I think that to a common user, web and email are transparent enough to understand what's wrong when they do fail. "Server not found, hm, is my internet connection working? Is my network cable plugged in? Is the little light on my DSL modem lit?"

Yes, it does take some basic internet literacy, but we can handle it. But that was then. Right now we have firewalls and NAT adding a new, and highly frustrating, layer of complexity to our experience. When people ask me "how come I can't connect to the forum from home but it works from work?", I can never rule out an evil firewall. When you want to play online games, transfer files over instant messaging, or use peer-to-peer or VoIP (Skype) and so on, sooner or later you have to figure out how to get traffic through NAT and how to manage your firewall.

The present internet isn't as simple as the "early internet". Web and email are still around, but we also use a lot of other things. Among them: BitTorrent. Now, BitTorrent is somewhat of a wonder; it's a phenomenally functional technology. It "always" works. It's slower if your network setup is suboptimal, but it works well enough to keep us happy. And so BitTorrent has achieved something very precious. It has successfully hidden the complexity of the system behind a simple interface. Yes, BitTorrent is complicated, no one would deny that. But do you even care? No, because you don't have to.

Contrast that with other technologies you use. Like... Skype. Keep in mind, Skype is known to be a *successful* case in this context. But in my experience, it has basically *never* functioned as advertised on any of my computers. At best, I get mediocre sound quality with occasional transmission delays. At worst, it's completely unusable. And I even know how to set up my network.

So the trend is to pass off increasingly complicated technology as "simple". Joel Spolsky calls these leaky abstractions: when something "simple" does not work, fixing it tosses you into the deep end. There is a point where users are no longer able to help themselves. In the old days, cars were quite simple, so a lot of people would fix their own cars if the problem wasn't too serious. That happens less now, because cars are loaded with fancy technology, and even opening the sealed cover of an electronic steering system would void your warranty.

We want to do more sophisticated things on the internet, so we create technology that is increasingly complicated. When it fails, we're out of luck. Instant messaging is made to appear incredibly simple, but it's not. When you're trying to transfer a file to the person you're chatting with, there is a long chain of conditions that must all be satisfied for the transfer to work. Voice and video chat are more complicated. Video-on-demand-over-BitTorrent (rumored to come in the near future) is more complicated still.
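To make the "long chain of conditions" concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the condition names, their hard-coded true/false values, and the helper names `first_failure` and `success_odds` are hypothetical and not taken from any real instant messaging client. It shows two things: a single broken link stops the whole transfer, and even fairly reliable links add up to a noticeably less reliable chain.

```python
from typing import Callable, List, Optional, Tuple

# A named condition: a label plus a zero-argument function
# that returns True when the condition holds.
Condition = Tuple[str, Callable[[], bool]]


def first_failure(chain: List[Condition]) -> Optional[str]:
    """Walk the chain in order and return the name of the first
    condition that fails, or None if every condition holds."""
    for name, holds in chain:
        if not holds():
            return name
    return None


def success_odds(per_link: float, links: int) -> float:
    """Chance that every link holds, assuming each one holds
    independently with probability per_link."""
    return per_link ** links


# Hypothetical chain for a direct file transfer between two chat clients.
# The names and the True/False results are made up for illustration.
transfer_chain: List[Condition] = [
    ("both users online", lambda: True),
    ("both clients support file transfer", lambda: True),
    ("sender's firewall allows the outgoing connection", lambda: True),
    ("receiver's NAT forwards the incoming port", lambda: False),
    ("receiver's firewall accepts the connection", lambda: True),
]

# Reports the NAT condition, the first link that fails in this made-up chain.
print(first_failure(transfer_chain))

# With five links that each work 95% of the time, the chain as a whole
# works only about 77% of the time.
print(round(success_odds(0.95, 5), 3))
```

In a real client each lambda would be an actual check, and the user would only ever see "transfer failed", which is exactly why the failing link is so hard to find from the outside.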

Right now, it's quite difficult to debug problems for other people over instant messaging. It takes a lot of time and effort, and there are many sources of failure. Some problems are never solved at all. I'm still able to debug my own problems, but with more and more layers added to every service, I wonder if there will be a time when I won't be able to do that either.

February 1st, 2007
